1. Introduction

Prior research suggests that survey design can influence responses (Stark et al. 2020; Tourangeau et al. 2000), though the extent to which abortion attitudes are fixed or malleable in response to in-survey context effects remains underexplored. Factors such as wording, framing, or question order may shape how people evaluate the morality, legality, and policy regulation of abortion (Bumpass 1997; Schuman et al. 1981). We examined whether, beyond potential measurement error, survey content might influence abortion attitudes.

Context Effects

Participants interpret and respond to questions based on the general context of a survey and specific questions previously asked (Holyk 2008; Schwarz and Strack 1991). The impact of survey content on participants’ responses is known as “context effects”[1] and is unavoidable. Survey questions activate information in respondents’ memories that can influence interpretations and responses to subsequent questions. This effect can result from both the order in which questions are asked and the question wording.

Researchers have implemented different strategies to measure context effects. Regarding abortion attitudes, Schuman et al. (1981) examined context effects by comparing responses to identical general questions across two surveys. In one survey, the general question followed more specific abortion-related items, resulting in more permissive attitudes toward legal abortion. Previous research has also investigated various methods for assessing abortion attitude malleability during survey completion. One approach involves presenting an informative statement before reiterating a previously asked question.[2] This study takes a different approach and asks the same survey item at the beginning and end of the survey. Contrasting self-reported information on surveys by repeating an item is a common practice for assessing validity (Goerman et al. 2018).

Theories of Attitudinal Change

Scholars have theorized about whether individual attitudes are fixed or malleable. Two main models of attitudinal change are the active updating model and the settled dispositions model. The active updating model emphasizes that people’s attitudes and behaviors are continuously shaped by exposure to new information (Kiley and Vaisey 2020). From this perspective, education and life experience can lead to shifts in abortion attitudes via increased information (Bueno et al. 2023; Currier and Carlson 2009).

In contrast, the settled dispositions model argues that one’s value systems are largely formed through early-life socialization and remain stable over time (Kiley and Vaisey 2020; Peterson et al. 2020). From this perspective, attitudes on sensitive issues, such as abortion, are expected to exhibit stability over time (Baumgartner et al. 2008). We explored whether the active updating model plays a larger role than the settled dispositions model in shaping people’s survey responses about abortion attitudes.

Current Study

Do survey items immediately influence people’s attitudes toward abortion? We used a concurrent mixed-methods design to examine whether and why English- and Spanish-speaking respondents changed their answers to a survey question on abortion legality while completing a web-based survey. Quantitatively, we assessed patterns of response change across two identical items presented at the beginning and end of the survey. Qualitatively, we analyzed open-ended responses of participants whose answers differed to uncover and interpret the reasons behind these changes. We also studied patterns by survey language, as prior research indicates Spanish-speaking participants express more conservative views than English-speaking participants (Bueno et al. 2025; Solon et al. 2022).

Beyond potential measurement error, our analysis interprets observed differences in light of context effects, because some respondents explicitly attributed changes in their views to the survey content. We assume that respondents’ accounts of why they answered the way they did are reasonably reliable.

2. Data and Methods

Data. In 2021, we conducted a web-based survey examining abortion legality/illegality with a sample of 2,489 U.S. adults using Qualtrics’ national panels (2,204 English-speaking, 285 Spanish-speaking). We set quotas for the main sociodemographic characteristics.[3] This study received approval from the Institutional Review Board at Indiana University.

Measures. We examined how participants answered an identical question, placed at the beginning and end of the survey. The item asked: Do you think abortion should be… 1) Legal in all cases; 2) Legal in most cases; 3) Illegal in most cases; 4) Illegal in all cases. Between the two items, participants answered questions on the consequences of abortion legality, attitudes toward abortion laws, views on abortion in specific circumstances, and perceptions of responsibility and penalties for illegal abortion. At the end of the survey, respondents also completed an open-ended item: In the first part of the survey, you said abortion should be [initial response]. At the end of the survey, you said abortion should be [final response]. Can you tell us why your answer did or did not change?

Analysis. We used a Stuart-Maxwell chi-square test[4] to quantitatively assess absolute changes in responses. We also classified changes by direction (shifts toward more restrictive vs. more permissive attitudes) and by magnitude, measured as the distance between response categories across the two observations.[5]
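The direction and magnitude classification described above can be sketched in a few lines. This is an illustrative sketch only, not the authors' code: the numeric coding (1 = legal in all cases through 4 = illegal in all cases) follows the order of the response options in the Measures paragraph, while the function name `classify_change`, the direction labels, and the example pairs are assumptions introduced here. It also treats adjacent categories as equally spaced, which is a simplification.

```python
# Response codes follow the order of options in the survey item.
CATEGORIES = {
    1: "Legal in all cases",
    2: "Legal in most cases",
    3: "Illegal in most cases",
    4: "Illegal in all cases",
}


def classify_change(initial: int, final: int) -> tuple[str, int]:
    """Return (direction, magnitude) for one respondent's pair of answers."""
    if initial not in CATEGORIES or final not in CATEGORIES:
        raise ValueError("responses must be coded 1-4")
    # Magnitude: number of response categories crossed (0 to 3).
    magnitude = abs(final - initial)
    # A higher code means a more restrictive position under this coding.
    if final > initial:
        direction = "more restrictive"
    elif final < initial:
        direction = "more permissive"
    else:
        direction = "no change"
    return direction, magnitude


# Hypothetical answer pairs, for illustration only (not study data).
for first, last in [(2, 3), (4, 1), (1, 1)]:
    print(CATEGORIES[first], "->", CATEGORIES[last], classify_change(first, last))
```

Counting categories crossed keeps the measure simple, but it cannot by itself reflect that some one-category moves (for example, across the legal/illegal boundary) may be substantively larger than others.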

Open-ended responses were coded in two phases by three authors, two of whom are bilingual (English and Spanish). In the first phase, one coder developed an initial codebook, which was reviewed and refined collaboratively by all coders. This codebook was used for initial coding. Next, we collaboratively and iteratively reviewed and refined the codebook further, finalizing it for subsequent coding,[6] resulting in a total of 23 codes[7] (Table A1; Appendix). Code application was not mutually exclusive. In the second phase, we conducted a sub-analysis of the code “survey effect.” This sub-analysis comprised 77 open-ended responses and yielded nine distinct subcodes.

3. Changes in Attitudes toward Abortion Legality

What percentage of people changed their answers during the survey?

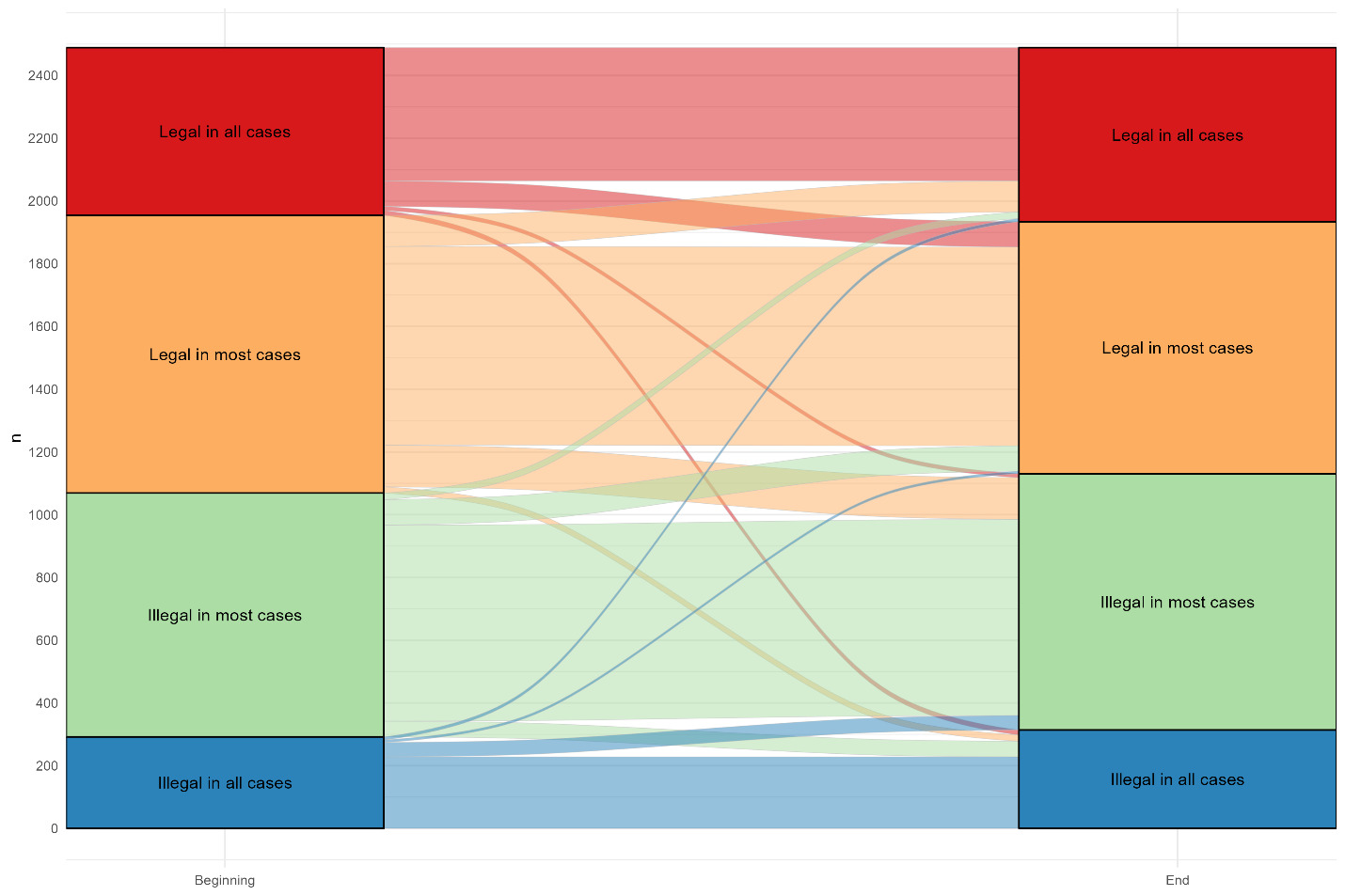

There was a significant association for direction and magnitude of change for the abortion attitude question: χ²(3) = 18.54, p < .01. Table 1 and its alluvial plot show the distribution of responses to the abortion legality question asked at both the beginning and end of the survey. Nearly one-quarter (23.2%) changed their response, with a significantly larger percentage shifting toward more restrictive responses (12.6%) than toward more permissive ones (10.6%; Table 2). The most stable group favored abortion being illegal in most cases (80.4% unchanged), while the least stable initially indicated abortion should be legal in most cases (71.5% unchanged). Post-hoc pairwise comparisons indicated a significant shift from “legal in most cases” to “illegal in most cases” (Table 1; χ²(1) = 12.64, p < .002), with the next largest shift being from “legal in most cases” to “illegal in all cases” (χ²(1) = 5.14, p < .07). Importantly, category distances were not necessarily symmetrical. For example, moving from illegal in all cases to illegal in most cases is not necessarily the same magnitude as moving from legal in most cases to illegal in most cases. The direction of the differences indicates response shifts were not symmetrical, with the largest shifts occurring across legal-illegal category boundaries (15.4% shifting from legal in most cases to illegal in most cases compared with 10.4% moving in the opposite direction).
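For readers unfamiliar with the Stuart-Maxwell test named in the Analysis paragraph, the following is a minimal sketch of how its statistic is computed from a square table of paired responses. It is written in plain Python under stated assumptions: the function name `stuart_maxwell` and the 4 × 4 table are hypothetical and are not the study's data, so the output will not reproduce the values reported above. The sketch returns only the statistic and its degrees of freedom (k − 1 for k categories); a p-value would additionally require the chi-square distribution.

```python
def stuart_maxwell(table):
    """Stuart-Maxwell statistic for a k x k table of paired responses.

    table[i][j] counts respondents who chose category i first and j last.
    Returns (statistic, degrees_of_freedom), where df = k - 1.
    """
    k = len(table)
    if any(len(row) != k for row in table):
        raise ValueError("table must be square")
    row_tot = [sum(row) for row in table]
    col_tot = [sum(table[i][j] for i in range(k)) for j in range(k)]

    m = k - 1  # drop the last category; marginal differences sum to zero
    d = [float(row_tot[i] - col_tot[i]) for i in range(m)]
    # Covariance matrix of the marginal differences under homogeneity.
    s = [[0.0] * m for _ in range(m)]
    for i in range(m):
        for j in range(m):
            if i == j:
                s[i][j] = row_tot[i] + col_tot[i] - 2 * table[i][i]
            else:
                s[i][j] = -(table[i][j] + table[j][i])

    # Solve s @ x = d by Gaussian elimination with partial pivoting.
    a = [s[i] + [d[i]] for i in range(m)]
    for c in range(m):
        p = max(range(c, m), key=lambda r: abs(a[r][c]))
        if abs(a[p][c]) < 1e-12:
            raise ValueError("singular covariance matrix")
        a[c], a[p] = a[p], a[c]
        for r in range(c + 1, m):
            f = a[r][c] / a[c][c]
            for q in range(c, m + 1):
                a[r][q] -= f * a[c][q]
    x = [0.0] * m
    for i in reversed(range(m)):
        x[i] = (a[i][m] - sum(a[i][q] * x[q] for q in range(i + 1, m))) / a[i][i]

    # Quadratic form d' S^{-1} d.
    return sum(d[i] * x[i] for i in range(m)), m


# Hypothetical counts (rows: first answer, columns: final answer).
hypothetical = [
    [50, 6, 2, 1],
    [5, 70, 12, 3],
    [1, 8, 60, 4],
    [2, 1, 5, 40],
]
stat, df = stuart_maxwell(hypothetical)
print(f"chi-square = {stat:.2f}, df = {df}")
```

With two categories the statistic reduces to McNemar's test, which is a convenient sanity check. In practice a statistics package would normally be used; statsmodels, for instance, offers a marginal homogeneity test on square tables.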

The language in which respondents completed the questionnaire was also associated with response patterns (Table 2). The proportion who changed responses was significantly higher among Spanish-speakers (39.6%) than among English-speakers (21.1%).[8] Among English-speakers, shifts were nearly balanced, with 10.1% moving toward greater permissiveness and 11% toward more restrictive responses. In contrast, Spanish-speakers exhibited more uneven shifts, with 14.7% moving toward greater permissiveness and 24.9% toward greater restrictiveness.

Table 2 also shows the magnitude of change among respondents who gave different answers to the two questions. Most shifts involved one category (≈10%), compared with two-category (1.2–1.3%) or three-category changes (0.4–0.6%). Spanish-speaking respondents showed larger shifts: among one-category changes, 20.4% moved toward greater restrictions versus 10.5% toward greater permissiveness, compared with 9.3% and 8.9% for English-speakers, respectively. Larger proportions of Spanish-speakers also changed by two (3.5–3.9% vs. 0.8–1%) and three categories (0.4–1.1% vs. 0.4–0.6%).

Why did people change their answers during the survey?

Immediately after the final question on abortion legality, participants explained why their responses remained the same or changed. Of respondents who changed (23.2%, n = 578), 13.3% (n = 77) attributed their shift to the survey itself. Table 3 summarizes their reasoning, distinguishing those who moved toward more permissiveness (n = 29) from those who moved toward more restrictiveness (n = 48).
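The three percentages involved here use different denominators, which is easy to lose track of. The short sketch below recomputes them from the counts reported in the text (total sample, respondents who changed, and respondents who attributed the change to the survey). The variable names and the helper `pct` are introduced for illustration only.

```python
# Counts as reported in the text (not raw data).
total_sample = 2489      # all respondents
changed = 578            # gave different answers to the two items
survey_attributed = 77   # changers who cited the survey itself
more_permissive = 29     # survey-attributed shifts toward permissiveness
more_restrictive = 48    # survey-attributed shifts toward restrictiveness


def pct(part, whole):
    """Percentage of `whole` represented by `part`, to one decimal place."""
    return round(100 * part / whole, 1)


share_changed = pct(changed, total_sample)               # changers / sample
share_of_changers = pct(survey_attributed, changed)      # survey-cited / changers
share_of_sample = pct(survey_attributed, total_sample)   # survey-cited / sample

print(share_changed, share_of_changers, share_of_sample)
```

The last figure is the one to keep in mind when reading the qualitative results: respondents who explicitly cited the survey make up roughly 3% of the full sample, even though they are 13.3% of those who changed.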

Respondents who shifted toward more permissiveness often said the survey made them realize abortion is a personal choice or prompted deeper reflection on the issue. For example, one participant moving from legal in most cases to legal in all cases noted that imagining hypothetical situations reinforced their belief in personal choice:

As I am reading some of these questions, they have me putting myself in these situations, and how I would feel if this was put upon me. Now, I do agree it should be legal in all cases. I may not agree with the reason why each person chooses abortion, but the choice should be legal.

Another reflected that the survey made them consider situations they had not thought of: “Because the survey made me realize there were other situations where it might be necessary for abortion.” Similarly, some participants explicitly stated the survey itself changed their views. One respondent who moved from legal in most cases to legal in all cases simply remarked: “I changed my mind during the survey.” A few participants noted that the survey made them recognize the complexity of the issue. One who shifted from illegal in most cases to legal in most cases said: “Because I didn’t realize how complex this issue is at first.”

Respondents who shifted toward legality in fewer cases also cited the survey as prompting deeper reflection, but with the opposite outcome. For example, one participant moving from legal in all cases to legal in most cases said, “Due to the questions, I reflected on my thoughts,” while another, shifting to illegal in most cases, emphatically stated, “It changed because of your survey!!” Many in this group highlighted disagreement with specific circumstances for abortion, such as one participant who moved from legal in most cases to illegal in most cases: “After seeing some of the reasons why a woman might get an abortion, I felt they were not all good enough reasons. A lot of them seemed selfish.” Others noted that they had been unaware of certain reasons, for example: “Because I wasn’t aware that some people seek abortions simply because the gender of the child is not what they desired.”[9]

4. Discussion

In this study, we explored whether and why participants changed their responses to an abortion legality survey item while completing a web-based survey.

Directionality, Magnitude, and Reasoning of Attitudinal Change

Overall, we found significant change with 23.2% shifting their views on abortion legality, and more people moving toward more restrictive (12.6%) than more permissive (10.6%) attitudes.[10] The responses with greatest change were those of participants who initially stated abortion should be legal in most cases. Approximately 28.5% changed their views compared with 19.6–21.9% from the other three groups. Yet, attitudinal change occurred in both directions. Paired with evidence from the open-ended responses, these findings suggest that respondents, particularly those with some degree of uncertainty, may shift their attitudes when confronted with the multifaceted dimensions of abortion (Hans and Kimberly 2014). Our qualitative analysis highlights that empathetic responses often emerged as participants recognized the complexity of the issue and acknowledged abortion as a deeply personal choice. At the same time, deeper reflection on the issue could also reinforce more restrictive views. The survey’s specific content—punishment attitudes for illegal abortion (hypothetical at the time)—may have contributed to attitudinal shifts.

Yet, participants’ open-ended responses centered more around abortion circumstances than around punishment when referring to the effect of the survey questions. Specifically, a context effect emerged with the inclusion of the gender-selection circumstance,[11] which some respondents disagreed with in their open-ended responses. As the active updating model of attitudinal change suggests (Kiley and Vaisey 2020), exposure to detailed information can prompt reconsideration of existing views, a dynamic that can operate in both directions. Our findings indicate that deeper engagement with diverse circumstances surrounding abortion (e.g., health-related, social-related reasons) played a central role in shaping respondents’ attitudes. Yet part of the measure’s low reliability may stem from its forced-choice format rather than from context effects alone. Because the item lacks a midpoint, respondents with moderate or ambivalent views must select a more polarized option, which can increase response instability even when their underlying attitudes remain unchanged. Importantly, the unreliability reflected in these response shifts is not entirely attributable to measurement error: some respondents indicated that engaging with the survey questions may have affected their answers, and at least 3% of the total sample (13.3% of those who changed their answer) explicitly attributed their change to the survey content.

Attending to the magnitude of change, most changes were not drastic, typically shifting by only one category and toward greater restrictions. However, it is notable that among participants who shifted their answers by two or three categories, shifts from illegality to legality were more common than the reverse. In other words, the percentage of participants who initially answered illegal in all cases and shifted to legal in all/most cases (6.2%) was higher than the percentage who began with legal in all cases and shifted to illegal in all/most cases (5.4%) (Table 1).

Survey Language

We also observed significant differences in the direction and magnitude of attitudinal change depending on survey language. Specifically, Spanish-speaking respondents tended to shift toward more restrictive views. This pattern is consistent with prior research indicating that Hispanic/Latinx U.S. adults often express more conservative attitudes toward abortion than non-Hispanic/Latinx adults (Branton 2007; Bueno et al. 2025). It is also plausible that many of the Spanish-language respondents were Spanish-dominant speakers (or even monolingual and foreign-born), which aligns with previous findings that first-generation Latinx migrants in the U.S. tend to hold more traditional views on abortion than second and later U.S.-born generations (Bueno et al. 2024).

Limitations

This study has practical limitations. First, as discussed earlier, the abortion legality question did not include a neutral response option; thus, the extent to which context effects may influence ambivalent or undecided attitudes toward abortion (il)legality is unclear. This key limitation represents a likely source of unreliability that, within this study design, cannot be disentangled from context effects.[12] In fact, the bidirectional shifts we observed may indicate that the lack of reliability in the measure might carry more weight than true context effects. Second, open-ended responses were generally brief and offered limited insight regarding participants’ reasoning for change. Third, some response shifts may have resulted from external factors unrelated to context effects, including self-reported human error, as revealed by some open-ended responses annotated in Table A1.

5. Conclusion

Overall, most respondents maintained consistent answers, and when changes occurred, they were generally modest, often shifting by only one category. While we do not discard measurement error, our mixed-methods findings suggest a potential context effect in almost a quarter of the sample, with 3% of the entire sample explicitly attributing a change in their answers to the survey content. Exposure to abortion-related content can encourage either more restrictive or more permissive attitudes when respondents are confronted with the issue’s multidimensional aspects (e.g., specific circumstances or potential punishments). However, there was a meaningful difference based on survey language: Spanish-speaking respondents more often shifted views toward more restrictive abortion attitudes.

From a methodological standpoint, our findings highlight the value of combining repeated questions with open-ended follow-ups in survey research. Reiterating a question allows researchers to detect shifts in responses over the course of a survey, providing insight into the stability and malleability of attitudes. Pairing this approach with open-ended items further illuminates the reasoning behind these shifts, revealing whether changes reflect genuine reconsideration, deeper reflection prompted by survey content, or other factors such as misreading or misunderstanding. This mixed-methods approach both improves our understanding of context effects and offers a practical tool for survey designers to identify potential biases and enhance the validity of survey measures. Incorporating such strategies can be particularly useful for sensitive or complex topics, such as abortion, where attitudes may be nuanced and influenced by the framing or ordering of questions. Overall, our results underscore the need to be attentive to all questions asked on a survey and the benefits of integrating qualitative insights into quantitative survey designs to capture the processes underlying attitude formation and change.

Corresponding author contact information

Kristen N. Jozkowski, PhD

School of Public Health, 1025 7th Street, School of Public Health Building, Indiana University, Bloomington, Indiana, 47405

knjozkow@iu.edu