Introduction

Survey research guidelines have long discouraged explicit inclusion of traditional opt-out response options such as “Don’t Know” (DK) in self-administered, telephone, or in-person surveys (Krosnick 1991; Schuman and Presser 1996). For in-person surveys, for instance, interviewers are trained not to volunteer a DK option and to record one only if a respondent independently offers it (Schuman and Presser 1996). Even then, interviewers are instructed to follow a DK (and other “no opinion” answers) with probes such as “take your time” or “what is your best guess?” Indeed, Schuman and Presser (1996) conceived of DKs as “momentary hesitancies, evasions, or signs of ambivalence.” They further characterized DKs as “missing data” that shrink the analytic dataset and reduce data quality (Schuman and Presser 1996). Similarly, Krosnick argues that no opinion responses, of which DK is one, are associated with “question ambiguity, satisficing, intimidation, and self-protection” (Krosnick 1991, 2002). Research indicates that selection of a DK response is associated with lower education level, later positioning of survey items, item repetition, survey mode, and use of incentives (Ferber 1966; Francis and Busch 1975; Krosnick 2002; Schuman and Presser 1996). Only more recently have arguments been made that DK responses can actually be meaningful and can improve item reliability and validity, particularly in the context of knowledge-based items, and that they should not be treated as missing values (Bishop 2005; Luskin and Bullock 2011; Manisera and Zuccolotto 2014; Tourangeau, Maitland, and Yan 2016).

Our research team conducted a study of medical oncologists with the principal objective to learn about their attitudes, beliefs, and practices regarding the role of medicinal cannabis (MC) in cancer care. At the time of the survey, MC remained federally illegal, even as roughly half of the states in the United States identified cancer as a qualifying condition for MC. Furthermore, a significant disconnect exists between the scientific evidence base for MC in oncology, which remains sparse, and the increasingly permissive legal landscape. We conducted cognitive interviews with five oncologists about the clarity and appropriateness of our draft survey questions. Through cognitive testing, we learned that in the setting of a contradictory and confusing legal landscape and the relative lack of conclusive scientific evidence, oncologists were unsure in their MC knowledge and practices. For this reason, DK options were deliberately added to certain items in the survey. Here we explore whether DK responses should be treated as missing data or whether they may be considered valid response options. We hypothesized that, in the context of this highly educated population (physicians) and a confusing MC legal and scientific landscape, DK responses enhanced data quality with regard to knowledge-based attitudes, beliefs, and practices.

Methods

In late 2016, we surveyed by mail a nationally representative sample of 400 medical oncologists drawn from SK&A healthcare databases. The draft survey included items from existing surveys, as well as newly generated items. We refined the survey based on five cognitive interviews with oncologists from states that did and did not permit MC. In response to several draft questions, the oncologists who participated in the cognitive testing said that their experience with MC was limited, either because of their legal setting or because they lacked sufficient knowledge about MC. A multidisciplinary team with expertise in survey methods, oncology, palliative care, psychiatry, and substance use disorders reviewed the cognitive interview data and deliberately added opt-out response options to three single-item questions and three batteries of questions. The DK opt-out was always the last response option presented and was formatted in italics for visual differentiation. The final questionnaire was anonymous, took approximately 10 minutes to complete, and had 27 items (inclusive of batteries of questions) evaluating cannabis knowledge, attitudes, and practices. A $50 cash incentive was included in the initial mailing, and nonrespondents received a reminder postcard and telephone call, followed by a final mailing. This study was approved by institutional review boards at the Dana-Farber Cancer Institute and the University of Massachusetts Boston. Full methodological details are presented elsewhere (Braun et al. 2018).

Survey Items that Included a DK Response Option

To assess cannabis’ comparative effectiveness, we asked, “B3. Compared to treatments you typically use, how would you rate the effectiveness of medical marijuana for the following cancer-related conditions?” Conditions included nausea/vomiting, pain, poor appetite/cachexia, depression, anxiety, poor sleep, and general coping. Responses included “much more effective,” “somewhat more effective,” “equally effective,” “somewhat less effective,” “much less effective,” and “I do not know.”

Since preclinical studies indicate that cannabis/cannabinoids appear to slow the growth of cancerous tumors, we asked, “B4. To what extent do you think medical marijuana has antineoplastic effects?” Response categories included “to a great extent,” “to some extent,” “to a very little extent,” “none at all,” and “I don’t know.”

We examined oncologists’ beliefs about the risks of cannabis relative to prescription opioids with: “B6. In your opinion, how do the risks of medical marijuana use compare to the risks of prescription opioid use?” Risks listed included paranoia/psychosis, anxiety, depression, confusion/impaired mentation, falls, driving difficulties, addiction, and overdose death. Response categories included “much higher than opioids,” “somewhat higher than opioids,” “comparable with opioids,” “somewhat lower than opioids,” “much lower than opioids,” and “I do not know.”

To learn about oncologists’ views about beneficial properties of cannabis for different types of patients, we asked, “B8. In your opinion, how often is medical marijuana beneficial for (a) those near the end of life, (b) those with early stage cancer, (c) cancer survivors, (d) children under 18 years old, and (e) the elderly?” Response options included “never beneficial,” “rarely beneficial,” “sometimes beneficial,” “usually beneficial,” “always beneficial,” and “I don’t know.”

To evaluate perceived modes of preferred MC delivery, we asked, “B9. What mode(s) of medical marijuana use do you prefer for your oncology patients?” Response categories included “smoking,” “vaporizing,” “ingesting orally,” “no preference,” “I do not support medical marijuana use of any sort,” and “I don’t know.” Subjects were asked to mark all that apply.

To assess views about the optimal composition of MC, we asked, “B10. Marijuana contains more than 60 active compounds. In general, do you prefer marijuana strains rich in tetrahydrocannabinol (THC) or cannabidiol for oncology patients?” Response options included “rich in tetrahydrocannabinol (THC),” “rich in cannabidiol,” “rich in both,” “no preference,” “I do not support medical marijuana use of any sort,” and “I don’t know.”

The questions that, based on the cognitive interview data, did not require DK responses included items such as: How concerned are you that smoking marijuana could increase the risk of infection in immunocompromised oncology patients? (Very concerned/Somewhat concerned/A little concerned/Not at all concerned); From which of the following sources do you get the majority of your information on medical marijuana? (Peer-reviewed sources/Non peer-reviewed sources/Dispensaries/ Patients and families/Other sources).

Predictors

Prespecified predictor variables included personal characteristics such as age, gender, and race/ethnicity; medical practice characteristics such as whether an oncologist treated adults, children, or both; whether they practiced in a state permitting MC (based on self-report); cancer patient volume; and whether they viewed themselves as having sufficient knowledge to make MC recommendations (based on a Yes/No question “Do you feel you have sufficient knowledge about the medicinal use of marijuana to make recommendations to oncology patients?”).

Analyses

To examine possible associations between DK responses and independent variables (such as respondents’ demographic characteristics, type of professional practice, and whether they believed themselves to have sufficient knowledge to make MC recommendations), we looked at DK data for each question on the survey that included a DK option. For each of the battery items, we computed two variable types — “everDK,” which selected respondents who marked DK for at least one question in the battery (i.e., B3everDK, B6everDK, and B8everDK) and “allDK,” which selected respondents who marked DK for all the questions in the battery (i.e., B3allDK, B6allDK, and B8allDK). We cross-tabulated each “everDK” variable; each “allDK” variable; and each single DK item (B4DK, B9DK, and B10DK) with respondent background characteristics and the sufficient knowledge variable (B11), and for each crosstab, we ran a Pearson chi-square test.
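The derived variables and the crosstab test described above can be sketched as follows. This is a minimal illustration, not the SPSS procedure the authors ran: the `"DK"` answer code, the function names, and the example respondents are all hypothetical, and the p-value formula applies only to a 2x2 table (one degree of freedom) without continuity correction.

```python
import math

DK = "DK"  # hypothetical code for an "I do not know" / "I don't know" answer

def ever_dk(battery):
    """True if the respondent marked DK for at least one item in the battery."""
    return any(answer == DK for answer in battery)

def all_dk(battery):
    """True if the respondent marked DK for every item in the battery."""
    return len(battery) > 0 and all(answer == DK for answer in battery)

def crosstab_2x2(dk_flags, knowledge_yes):
    """Count respondents by sufficient knowledge (rows: Yes, No) and DK flag (columns: DK, not DK)."""
    table = [[0, 0], [0, 0]]
    for dk, knows in zip(dk_flags, knowledge_yes):
        table[0 if knows else 1][0 if dk else 1] += 1
    return table

def pearson_chi_square_2x2(table):
    """Return (chi2, p) for a 2x2 table of observed counts, with df = 1."""
    row_totals = [sum(row) for row in table]
    col_totals = [table[0][j] + table[1][j] for j in range(2)]
    n = sum(row_totals)
    if 0 in row_totals or 0 in col_totals:
        raise ValueError("every row and column needs at least one respondent")
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (table[i][j] - expected) ** 2 / expected
    # Survival function of the chi-square distribution with 1 degree of freedom.
    p = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p

# Made-up B3 battery answers for four respondents (illustration only).
b3_answers = [
    [DK, DK, DK],
    [DK, "equally effective", "somewhat less effective"],
    ["much more effective", "equally effective", "equally effective"],
    [DK, DK, DK],
]
b3_ever_dk = [ever_dk(battery) for battery in b3_answers]
b3_all_dk = [all_dk(battery) for battery in b3_answers]
```

With real data, `crosstab_2x2(b3_ever_dk, knowledge_yes)` would build one of the crosstabs, where `knowledge_yes` is a hypothetical list of booleans taken from the sufficient knowledge item.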

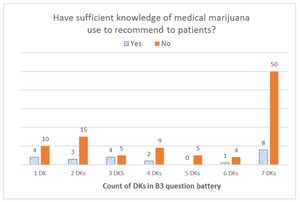

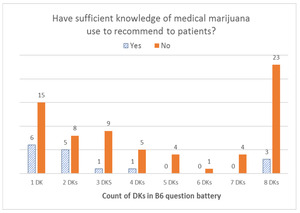

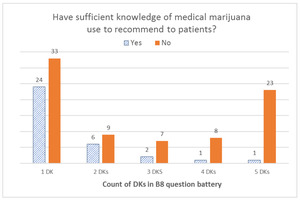

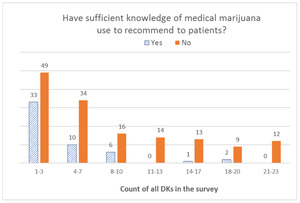

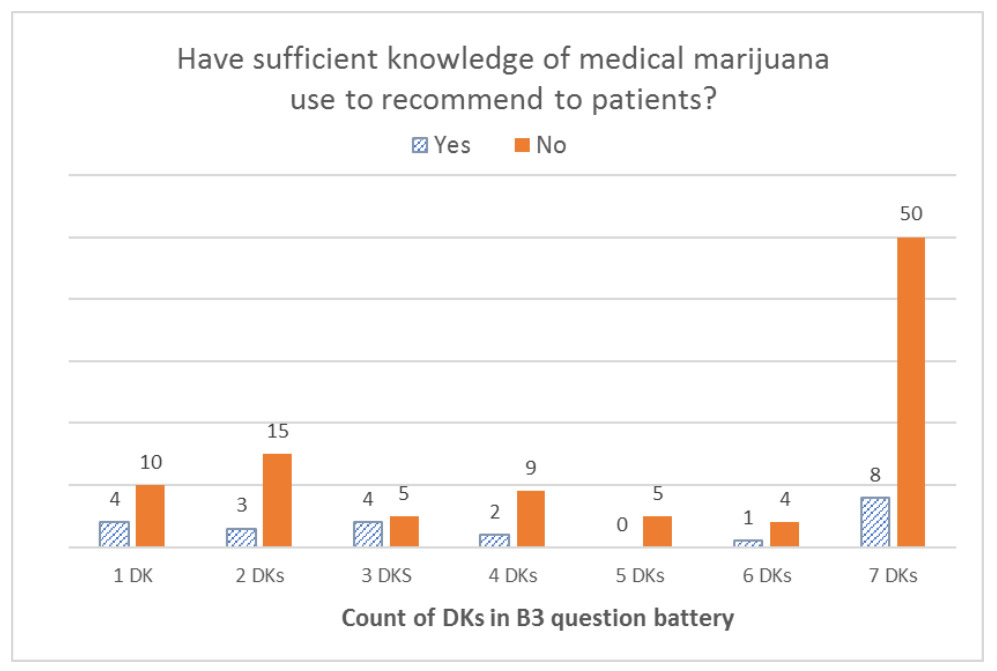

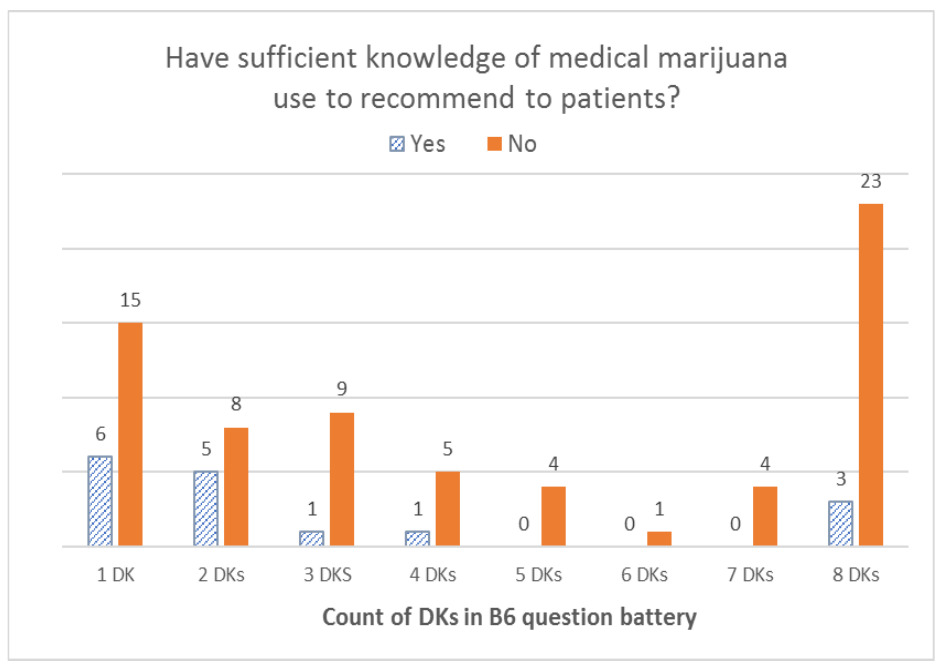

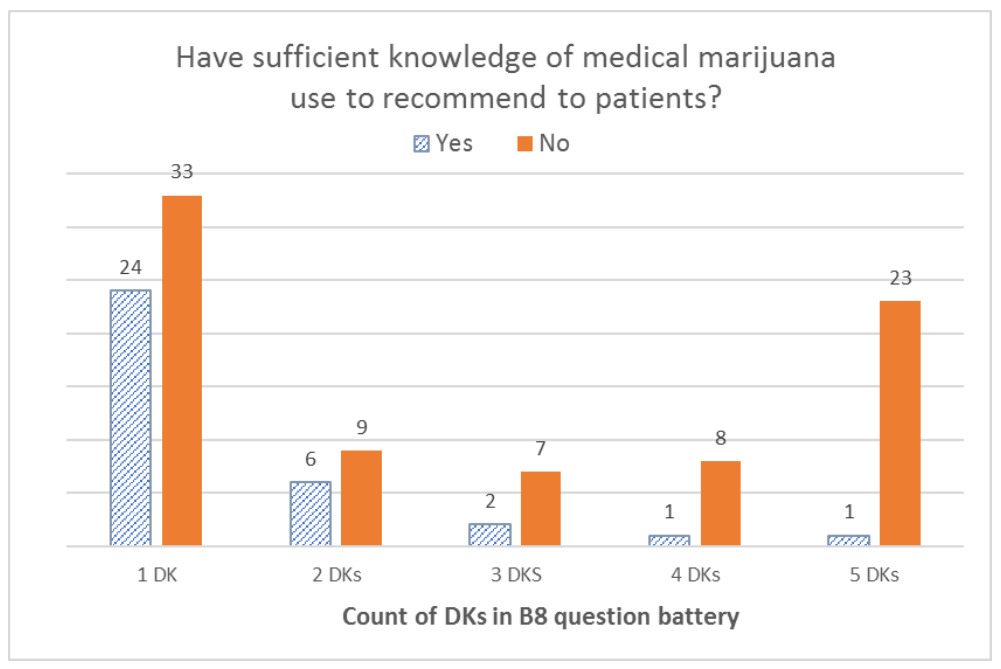

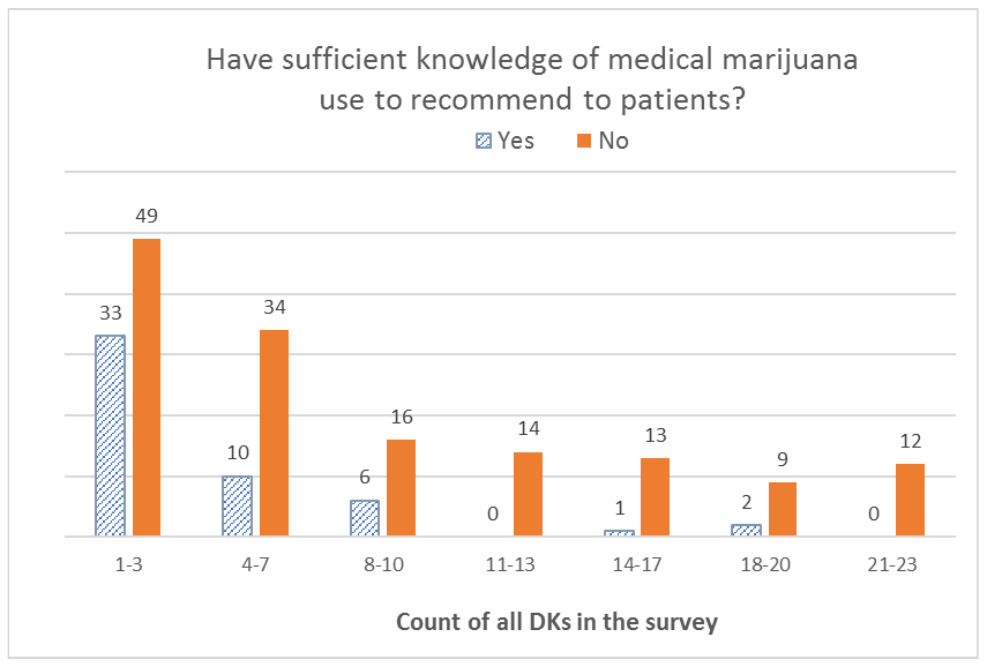

To look at the distribution of DK responses within dichotomous responses (Yes/No) to the sufficient knowledge question, we constructed bar graphs for the sum of DK responses in each battery item (B3, B6, and B8), as well as for the sum of all DK responses in the survey. We also ran logistic regressions for each of the nine DK variables, controlling for all predictor variables specified previously. Finally, we conducted a Mann-Whitney test to compare medians for all DK responses within Yes and No responses to the sufficient knowledge question. All analyses were carried out using IBM SPSS V.20.
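The per-respondent DK sums and the Mann-Whitney comparison can be illustrated with a short sketch. It computes the U statistic directly from its pairwise definition and leaves out the p-value, which SPSS derives separately. The `"DK"` answer code, the function names, and the DK counts below are made up for illustration and are not the study data.

```python
from statistics import median

DK = "DK"  # hypothetical code for an "I don't know" answer

def dk_count(answers):
    """Number of DK answers one respondent gave across the supplied items."""
    return sum(1 for answer in answers if answer == DK)

def mann_whitney_u(x, y):
    """Return (U_x, U_y); each tie between an x value and a y value counts as 0.5."""
    u_x = 0.0
    for xi in x:
        for yj in y:
            if xi > yj:
                u_x += 1.0
            elif xi == yj:
                u_x += 0.5
    u_y = len(x) * len(y) - u_x
    return u_x, u_y

# Made-up DK counts per respondent, split by the sufficient knowledge answer.
insufficient = [7, 9, 3, 12, 7]   # answered "No"
sufficient = [2, 1, 4, 2]         # answered "Yes"

u_no, u_yes = mann_whitney_u(insufficient, sufficient)
print("medians:", median(insufficient), median(sufficient))
print("U (smaller of the two):", min(u_no, u_yes))
```

The pairwise loop is quadratic in the sample sizes, which is acceptable for a few hundred respondents. A rank-sum formulation gives the same U more efficiently.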

Results

The results of the overall survey are presented elsewhere (Braun et al. 2018). In brief, of 400 initially sampled oncologists, 237 of the 376 eligible respondents completed the survey, yielding a final response rate of 63.0% (AAPOR RR4[1]). The majority of respondents were male (65.0%), white, non-Hispanic (57.0%), and practicing in a state that permitted MC (53.2%) (see Table 1 in Appendix). Seventy percent of all respondents believed that they did not have sufficient knowledge about MC to make recommendations.
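As a quick arithmetic check of the reported rate, using only the counts given above (the full AAPOR RR4 formula also accounts for partial interviews and cases of unknown eligibility, which are not itemized here):

$$
\text{response rate} = \frac{237}{376} \approx 0.630 = 63.0\%, \qquad 400 - 376 = 24 \text{ sampled oncologists not eligible.}
$$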

With the exception of B8everDK (MC for different types of patients), we found that B3everDK; B6everDK; each of the “allDK” variables; and each of the single items (B4DK, B9DK, and B10DK) were significantly associated with the sufficient knowledge question. The B6everDK variable (risks of MC vs. opioids) was significantly associated with respondents’ sex and race. Respondent characteristics such as sex, age, and race were not associated with other DK responses. Type of practice (non-hospital vs. hospital) was significantly associated with three DK responses, and whether the physician’s state of practice permitted MC was significantly associated with several DK responses. These associations are presented using crosstabs/Pearson chi-square tests (p<0.10) in Table 2 in Appendix. In the logistic regressions for each of the nine variables, having sufficient knowledge was the only predictor that was significant in six of the nine regressions, and the direction was consistent with the bivariate findings (see Table 3 in Appendix).

When we looked at the distribution of DK responses among respondents who reported not having sufficient knowledge to recommend MC to their patients, we found that the overall distribution was left-skewed for the B3 battery (effectiveness), with the highest number of respondents selecting DK for all seven items in the battery. With the B6 battery (risks), the overall distribution was U-shaped, with the highest peak on the right (for all eight items) and another peak on the left (for only one item). For the B8 battery (type of patients), the distribution was a bimodal U-shape, with the greatest number of respondents offering only one DK answer and a second peak for those who offered DK answers for all five items in the battery. Finally, the overall distribution was right-skewed for all DK responses combined, with the right tail (more DKs) being much longer than the left tail (fewer DKs); that is, most respondents gave one or a few DK responses, and fewer respondents gave a DK response to all items. For additional details, see Figures 1–4.

Finally, we conducted the Mann-Whitney test to compare medians for all DK responses within Yes/No responses to the sufficient knowledge question. We found that those who reported not having sufficient knowledge had a median of seven (range from one to 23) DK responses, compared with a median of two (range from one to 20) among those who reported having sufficient knowledge. The Mann-Whitney U value was 2261.000, and it was highly significant (p<0.001).

Discussion

Findings from a national survey of medical oncologists about their knowledge, beliefs, and practices regarding MC appear to contradict the long-held survey research position that opt-out response options invariably increase satisficing, in other words accepting the most readily available option as satisfactory. In response to cognitive interview feedback during survey design, a DK answer option was included in six out of the 27 items.

Several lines of evidence indicate that respondents’ use of these DK options appears to be thoughtful and truly reflective of their knowledge and experience, as measured by consistency with responses to other survey items. Some of this evidence is based on participants’ responses to a Yes/No question: “Do you feel you have sufficient knowledge about medicinal use of marijuana to make recommendations to oncology patients?” Respondents practicing in states without comprehensive MC laws (who were thus likely to have had less exposure to medicinal use of cannabis than their colleagues in states with MC laws), and who reported not having sufficient knowledge, tended to appropriately select DK response options. Similarly, the response bar graph for a battery of questions on the effectiveness of MC for several symptoms was overall left-skewed, and those who viewed themselves as lacking sufficient knowledge to make MC recommendations were much more likely to respond with DK to all questions in the battery than colleagues who perceived themselves as having sufficient MC knowledge.

Even beyond the sufficient knowledge question, additional lines of evidence support the notion that DKs appear to be thoughtfully chosen to truly reflect a lack of confidence regarding knowledge of MC. In a battery of questions that asked how often participants believed MC to be beneficial for various populations of patients (pediatric patients, the elderly, those near the end of life, those with early stage cancer, and cancer survivors), the response bar graphs were U-shaped, with a high proportion of respondents replying DK to one or all of the B8 questions. This pattern suggests that respondents provided DK answers either for a patient population they do not treat (e.g., children if they only treat adults) or because they do not have sufficient knowledge to respond across patient groups. The majority of DKs were given to the question about the benefits of MC for children, offered by medical oncologists exclusively treating adults. Similarly, the bar graph for a battery of questions comparing the risks of MC to those of prescription opioids (B6) was overall U-shaped, although with considerably more variation than for the B8 battery. Although we cannot be certain as to why this increased variability existed, we can posit that oncologists may have felt better informed on the latter than on the former.

Certainly, these analyses have limitations. The items that included DK options were selected deliberately rather than randomly, based on the input we received from the cognitive testing respondents, who felt that they had limited knowledge or experience to answer the specific questions. Had the DK options been assigned randomly, our analysis would have allowed for comparison of nonresponse rates between similar questions with and without opt-out options. Nonetheless, we believe our findings are important and meaningful. In addition, while we conducted the multivariate analysis to control for covariates, the findings of the logistic regressions are limited given the relatively small sample size (n=237). Furthermore, the bivariate analyses (Pearson chi-square tests and Mann-Whitney tests) and multivariate analyses (logistic regressions) were not adjusted for multiple testing (e.g., with a Bonferroni correction). To address potential concerns regarding the generalizability of our findings, we examined how survey respondents compare with the entire eligible sample. Based on the limited information we had about the entire sample (gender, region, and subspecialty), we found that the demographic characteristics of respondents differed by no more than three percentage points from the characteristics of the entire eligible sample. We believe that differences of these sizes would have a negligible effect on the answers to the selected questions.
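For readers unfamiliar with the multiple-testing adjustment mentioned among the limitations, a Bonferroni correction can be sketched as below. The p-values are placeholders rather than results from this study, the function name is hypothetical, and the default `alpha=0.10` is an assumption that simply mirrors the p<0.10 threshold used in the bivariate tables.

```python
def bonferroni(p_values, alpha=0.10):
    """Return (raw p, adjusted p, significant?) for each test in the family.

    A test stays significant only if its raw p-value is at or below
    alpha divided by the number of tests.
    """
    m = len(p_values)
    threshold = alpha / m
    return [(p, min(1.0, p * m), p <= threshold) for p in p_values]

# Placeholder p-values for a family of three tests (not study results).
for raw, adjusted, significant in bonferroni([0.001, 0.02, 0.5], alpha=0.05):
    print(f"raw={raw:.3f} adjusted={adjusted:.3f} significant={significant}")
```

Bonferroni is conservative when the tests are correlated, as the nine DK variables here likely are, so it serves as a cautious bound and not a definitive adjustment.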

There is a known relationship between unit and item nonresponse (Yan and Curtin 2010). However, given that we had a reasonably high survey response rate (63%), we believe that respondents were genuinely interested in our survey and that DKs in selected questions are informative and should not be considered missing data. We believe that DKs were meaningful responses reflecting a genuine lack of knowledge and experience with MC that improved the quality of our data. With emerging therapies or controversial topics, future studies will likely survey physicians about their opinions regarding new treatments, for which they might have more or less knowledge and experience. Including a question that asks whether they have sufficient knowledge to recommend such treatments may improve the quality of the survey data and also provide additional opportunities to analyze the relationship between their assessed knowledge and validity of DK responses.

Acknowledgments

This research was supported by the Hans and Mavis Lopater Foundation.

Corresponding Author:

Dragana Bolcic-Jankovic, PhD

University of Massachusetts Boston, Center for Survey Research

100 Morrissey Blvd. Boston, MA 02125

E-mail: dragana.bjankovic@umb.edu

Phone: 617-287-6692