Introduction

The World Trade Center Health Registry (WTCHR) was established in 2002, forming a longitudinal cohort of survivors of the 9/11/2001 terrorist attacks in New York City. More than 71,000 enrollees have been surveyed about their 9/11-related short- and long-term health effects in four major surveys (i.e., waves 1–4) as well as in smaller, focused in-depth surveys. From September 2017 until March 2018, a subset of enrollees was invited to participate in the Health & Employment Survey (HES). Using data and metadata from the HES, we sought to understand survey mode preferences (i.e., paper vs. web) and patterns of response among enrollees. This information may inform future surveys by helping to boost completion rates, optimize sampling strategies, and allocate resources effectively.

Web surveys have become more acceptable to participants in recent years (Beebe et al. 2018). Researchers often prefer to use web surveys for data collection because they offer numerous benefits: faster response times (Hardigan, Popovici, and Carvajal 2016; Kwak and Radler 2002; Burgess, Nicholas, and Gulliford 2012), better data completeness and higher data quality (Jiang et al. 2017; Kwak and Radler 2002; Manfreda et al. 2008), no printing (Ardalan et al. 2007) or mailing expenses (Kaplowitz, Hadlock, and Levine 2004; Saleh and Bista 2017), allowance for self-pacing (Jiang et al. 2017), and perceived privacy while answering sensitive questions (Jiang et al. 2017). A consistently reported drawback of web surveys is that their response rates are typically the lowest among survey modes (Van Mol 2017; Funkhouser et al. 2014; Kwak and Radler 2002; Manfreda et al. 2008; Sax, Gilmartin, and Bryant 2003; Sinclair et al. 2012). This is not the case for all survey populations, however (Jiang et al. 2017). Also, lower response rates for web surveys may be more likely among one-time survey respondents than among panel respondents (Manfreda et al. 2008).

Paper surveys have strengths and limitations as well. Results from some studies have shown that older populations, including clinicians, tend to be more responsive to paper surveys (Hardigan, Popovici, and Carvajal 2016; Ernst et al. 2018). A key strength is that response rates for paper-only surveys are nearly always higher than those for web-only surveys (Sinclair et al. 2012; Fan and Yan 2010). Limitations to paper surveys are reflected in the benefits of web surveys discussed previously.

When different modes have similar response rates or similar volumes of response, mixed-mode methodology becomes a good option (Hagan, Belcher, and Donovan 2017; Funkhouser et al. 2014; Jiang et al. 2017; Dykema et al. 2013). A study by Hagan, Belcher, and Donovan (2017) found that among their sample population of cancer patients, more vulnerable patients tended to prefer paper surveys over web surveys. The researchers concluded that it was important to offer the paper survey as an option, particularly for the more vulnerable (e.g., older, non-White, less educated, and less wealthy) population (Hagan, Belcher, and Donovan 2017). Mixed-mode surveys also tend to have higher response rates than single-mode surveys (Beebe et al. 2018; Millar and Dillman 2011; Rubsamen et al. 2017).

Web surveys that use email invitations as their method of deployment also enable researchers to send email reminders throughout the data collection period. Reminders for paper surveys can increase labor and costs for the research team, while email reminders tend to be easy to send and cost-effective. Reminders also increase the likelihood of web survey response (Cernat and Lynn 2018; Van Mol 2017; Goritz and Crutzen 2012). According to results by Svensson et al. (2012), those who received seven to nine email reminders had a higher response rate than individuals who received fewer or more reminders. They also noted that they received little negative feedback about the recurrent reminders (Svensson et al. 2012). Most researchers suggest using a limited number of reminders (Saleh and Bista 2017; Van Mol 2017; Sanchez-Fernandez, Munoz-Leiva, and Montoro-Rios 2012), as sending too many may overwhelm or bother the survey respondents (Goritz and Crutzen 2012).

This study had three aims. (1) We were interested in whether survey mode was related to survey completion, predicting that odds of completion would be greatest for enrollees offered both modes. (2) We examined demographic differences in the HES for paper respondents compared with web respondents and for earlier respondents compared with later respondents. Our expectation was that web respondents and earlier respondents would be more likely to be young and White non-Hispanic, with greater educational attainment and household income, than paper respondents and later respondents. (3) We sought to determine whether web survey respondents were responsive to up to twelve email reminders. We hypothesized that there would be no noticeable increases in survey response after six to eight email reminders.

Methods

Data Source & Sampling

The HES was an in-depth survey conducted during September 2017 – March 2018 on a sample of enrollees from the WTCHR. A total of 71,426 individuals are enrolled in the WTCHR, and all have been invited to participate in the major wave surveys: wave 1 (2003–2004), wave 2 (2006–2007), wave 3 (2011–2012), and wave 4 (2015–2016). The sampling strategy for the HES reflected the survey’s focus on retirement patterns and related health conditions; those who reported being retired or unemployed for health reasons at any point during the waves 2–4 surveys were invited to participate as well as a sample of age-matched controls (Yu, Seil, and Maqsood 2019). The sample pool included those who were under the age of 75 years, English-speaking, and had completed both wave 1 and wave 2 surveys. A total of 22,795 enrollees were invited to participate in the HES, and 14,887 enrollees completed the survey (completion rate = 65%). This study sample total differs from a previously published number because our sample was limited to enrollees who had a valid mailing address or email address on file (Yu, Seil, and Maqsood 2019).

Survey Deployment & Communication

The HES was offered in two modes: paper and web. Enrollees with email addresses in our system were invited to participate via web; others were invited to participate via paper survey sent through the mail. After the first invitations were sent, those invited to complete the web survey received a number of email reminders to fill out the survey if they had not yet done so. About ten weeks after the initial paper and web invitations, a second batch of paper surveys was mailed to enrollees who had not completed the survey, regardless of initial mode invitation. Therefore, some enrollees were able to participate via either mode. Email reminders continued to be sent about every one-to-three weeks to enrollees invited to participate in the web survey, and a third batch of paper surveys was mailed to nonresponders about 11 weeks after the second batch was sent. The final mailing of paper surveys and the final two email reminders introduced a monetary incentive (i.e., a $10 gift card) upon survey completion.

Statistical Analyses

We used logistic regression modeling to determine the likelihood of responding to the HES based on mode of invitation and demographic factors: gender; race/ethnicity; age group (based on age on 9/11/2017); household income; marital status; educational attainment; and enrollee eligibility group (i.e., rescue/recovery worker, resident, area worker, passer-by, or student/school staff).
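As a point of reference for reading the odds ratios reported in the Results, the following is a minimal sketch of how a logistic regression coefficient and its standard error are conventionally converted into an odds ratio with a 95% confidence interval. It assumes a Wald-type interval, which the text does not specify; the function name and the input values are hypothetical and are not taken from the HES models.

```python
import math

def odds_ratio_with_ci(beta, se, z=1.96):
    """Convert a logistic regression coefficient (log-odds) and its
    standard error into an odds ratio with a Wald confidence interval.

    Assumption: a Wald interval, exp(beta +/- z * se), with z = 1.96
    for 95% coverage. The study's software may have used another method.
    """
    point = math.exp(beta)
    lower = math.exp(beta - z * se)
    upper = math.exp(beta + z * se)
    return point, lower, upper

# Hypothetical coefficient and standard error, for illustration only.
or_, lo, hi = odds_ratio_with_ci(beta=0.8, se=0.05)
print(f"OR = {or_:.2f} (95% CI: {lo:.2f}, {hi:.2f})")
```

An odds ratio above 1 indicates higher odds of response than in the reference group, and an interval that excludes 1 corresponds to significance at the 0.05 level under this approximation.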

We were also interested in potential demographic differences between earlier versus later respondents and paper versus web respondents. Earlier respondents were those whose surveys were received during September 2017 – November 2017; later respondents’ surveys were received during December 2017 – March 2018. We calculated descriptive statistics and conducted chi-square testing to assess any differences between these groups.
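The chi-square comparison described above can be sketched for the simplest case, a 2x2 table, as follows. This is an illustrative sketch only: it assumes a Pearson chi-square test without continuity correction, the function name is hypothetical, and the counts are invented for the example and are not HES data.

```python
import math

def chi_square_2x2(table):
    """Pearson chi-square statistic (no continuity correction) and
    p-value for a 2x2 table of counts given as [[a, b], [c, d]]."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i in range(2):
        for j in range(2):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / n
            stat += (table[i][j] - expected) ** 2 / expected
    # With 1 degree of freedom, the upper-tail probability of the
    # chi-square distribution equals erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value

# Hypothetical counts, for illustration only (not HES data):
# rows = earlier / later respondents, columns = two demographic groups.
stat, p = chi_square_2x2([[650, 350], [600, 400]])
print(f"chi-square = {stat:.2f}, p = {p:.4f}")
```

Demographic variables with more than two categories would need the general r x c form of the test, with (r - 1)(c - 1) degrees of freedom.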

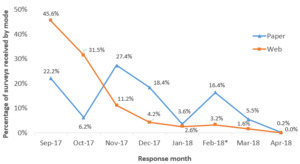

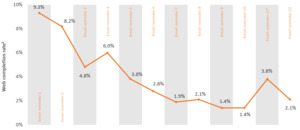

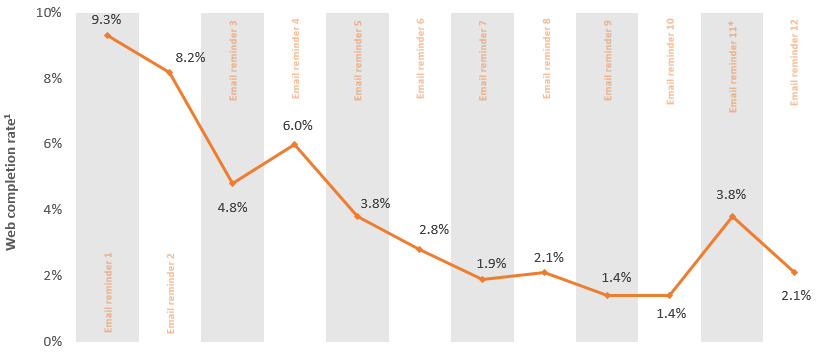

To illustrate the data collection volume by survey mode, we calculated response proportions for both paper and web surveys by month. We calculated completion rates following each of the 12 email reminders sent to enrollees invited to respond via web survey to understand whether numerous email reminders would result in continued survey response gains. The web completion rate was calculated by taking the number of web surveys received following an email reminder and prior to the subsequent email reminder, divided by the number of enrollees who received the reminder.
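The per-reminder web completion rate defined above can be sketched as follows. The function name, dates, and counts are hypothetical. The boundary handling is also an assumption: a survey received on the day a reminder was sent is attributed to that reminder, and the window for the final reminder is assumed to close at the end of data collection.

```python
from datetime import date

def completion_rates(reminders, completions, end_date):
    """Compute one completion rate per email reminder.

    reminders:   list of (send_date, n_recipients), sorted by send_date
    completions: list of dates on which web surveys were received
    end_date:    first day after data collection closed (exclusive)
    """
    rates = []
    for i, (sent, n_recipients) in enumerate(reminders):
        # The window runs from this reminder up to, but not including,
        # the next reminder (or end_date for the final reminder).
        if i + 1 < len(reminders):
            window_end = reminders[i + 1][0]
        else:
            window_end = end_date
        received = sum(1 for d in completions if sent <= d < window_end)
        rates.append(received / n_recipients)
    return rates

# Hypothetical schedule and receipts, for illustration only.
reminders = [(date(2017, 10, 1), 1000), (date(2017, 10, 15), 900)]
completions = [date(2017, 10, 2)] * 100 + [date(2017, 10, 20)] * 45
rates = completion_rates(reminders, completions, end_date=date(2017, 11, 1))
print([f"{r:.1%}" for r in rates])
```

Because the denominator is the number of enrollees who received each reminder, it shrinks as earlier responders drop out of the reminder list, so the rates for later reminders are not directly comparable to raw counts of surveys received.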

Results

Using logistic regression modeling to predict the likelihood of responding to the HES, we confirmed that survey invitation mode was an important predictor of survey response. Compared with those invited to participate with paper only, those invited to participate via web only had 7.0 times the odds (95% confidence interval [CI]: 6.01, 8.07) of responding to the survey (see Table 1). This is mainly due to the survey deployment: nonresponders to the web invitations were later given the opportunity to respond via paper survey and were therefore categorized with an invitation mode of both paper and web. Those invited to both modes had 2.3 times the odds (95% CI: 2.13, 2.43) of responding to the survey compared with the paper only group.

Other demographic factors were predictive of survey response as well. Black non-Hispanic (odds ratio [OR] = 0.74, 95% CI: 0.67, 0.82) or Asian enrollees (OR = 0.84, 95% CI: 0.71, 1.00) were less likely to respond to the survey than White non-Hispanic enrollees. Enrollees in older age groups were significantly more likely to participate in the survey than those in the 28–49 years age group (50–59 years: OR = 1.43, 95% CI: 1.27, 1.60; 60–64 years: OR = 1.82, 95% CI: 1.63, 2.04; 65+ years: OR = 1.91, 95% CI: 1.72, 2.13). Compared with the highest household income group, the lowest income group was less likely to participate in the survey (OR = 0.79, 95% CI: 0.68, 0.93). In contrast, the second highest income group was more likely to participate (OR = 1.14, 95% CI: 1.03, 1.26) compared with the highest income group. Compared with rescue/recovery workers, residents were much less likely to respond (OR = 0.67, 95% CI: 0.60, 0.74). We also found an interaction between race/ethnicity and income that suggested that Black non-Hispanic enrollees in the lowest two income groups were more likely to respond to HES than White non-Hispanic enrollees in the highest income group (not presented in Table 1).

We also made demographic comparisons between paper (N = 7,481) versus web (N = 7,406) and earlier (N = 10,715) versus later (N = 4,172) respondents. There were nearly equal numbers of paper and web responses to the HES (see Table 2). Paper respondents had lower household incomes than web respondents, with 22.3% having an annual household income under $50,000 compared with 15.0% of web respondents (p<0.0001). Web respondents were more likely to have a bachelor’s degree or higher than paper respondents (58.5% vs. 46.5%, respectively; p<0.0001). Among those who responded to the survey during the first three months of data collection, 65.7% were males; among those who responded to the survey later, 60.5% were males.

There was not a linear pattern to the volume of surveys received during the data collection period (see Figure 1). Paper surveys demonstrated volume increases following each of the three mailings. The majority of web surveys (77.1%) were received within the first two months of data collection. Both web and paper surveys showed an increase in surveys received during February 2018, when the monetary incentive was introduced to those who had not yet completed the survey.

Our data demonstrated that even after 12 email reminders, there were increases in web survey completions (see Figure 2). Completion rates ranged from 9.3% after the first reminder to 1.4% after the ninth and tenth reminders. Web survey completion rates more than doubled after the monetary incentive was introduced (3.8%) and remained elevated even after the final email reminder (2.1%).

Discussion

In this study, we were interested in learning more about differences in survey mode preferences and patterns, specific to the WTCHR enrollee population. There were nearly equal numbers of paper and web responses to the HES. Nearly half of the total web surveys were received in the first month of data collection; over three-quarters were received during the first two months. By comparison, only 22% of paper surveys were received in the first month of data collection. Not only did web survey data come in faster than paper survey data, but statistical modeling showed that the likelihood of responding to the HES was more than doubled when the web survey was an option (compared with those only offered the paper mode). Enrollees who were male, older, and had higher household income were also more likely to respond. Compared with rescue/recovery workers, lower Manhattan residents were significantly less likely to participate in the HES.

We also wanted to consider the level of impact that up to 12 email reminders would have on survey response. The literature is mixed on how many email reminders survey participants should receive, though the guidance tends to be that fewer is better. In our study, there was a noticeable increase in returned surveys following each reminder. If we had offered the monetary incentive in an earlier email reminder, we might have received even more responses, but offering it earlier might have been prohibitively expensive, as the volume of both web and paper surveys received would likely have increased.

To understand the impact of survey mode patterns and preferences, we examined differences in demographic factors between paper versus web and earlier versus later respondents. Our data showed that web respondents had higher household incomes and educational attainment than paper respondents. Earlier responders to the HES were more likely to be male and to have higher income levels than later responders. Because survey volume was nearly evenly split by mode, we conclude that it is important to use mixed-mode methodology among our cohort.

As the enrollees are part of an ongoing, closed cohort, it is important to keep them engaged in the research process. Survey planning must balance the needs of the research team and the participants, with the ultimate goal of achieving high completion rates for WTCHR surveys while keeping in mind both time and resource considerations. The cohort’s unique composition could limit the generalizability of these results to other survey populations, but they would likely be of use to other long-running cohorts aiming to minimize loss to follow-up. The results may also be relevant to survey populations in which individuals have a shared experience (e.g., a natural disaster or pandemic).

Conclusions

More than 16 years after 9/11/2001, the WTCHR continues to maintain an active and responsive cohort of survivors. With consistent communication from the staff at WTCHR (e.g., annual reports, holiday cards, and website updates); assistance with finding survivor resources; and proven utility of survey data via scientific outputs, the WTCHR enrollees have continued their participation in the research at high levels. The use of a mixed-mode survey methodology was necessary in achieving a strong completion rate, as demonstrated by the fact that roughly half of the respondents chose to respond via paper and roughly half via web. Because respondent demographics differed based on the mode of survey, offering both helps minimize response bias. That said, the cost differential by mode is significant; we estimated that each paper survey response cost about 16 times more than each web survey response. The cost, response time, and data quality benefits of web surveys, as well as the ability to send numerous email reminders, lead us to conclude that efforts must be made to collect email addresses for all WTCHR enrollees and to correct the numerous invalid email addresses in the system.

Funding

This publication was supported by Cooperative Agreement Numbers 2U50/OH009739 and 5U50/OH009739 from the National Institute for Occupational Safety and Health (NIOSH) of the Centers for Disease Control and Prevention (CDC); U50/ATU272750 from the Agency for Toxic Substances and Disease Registry, with support from the National Center for Environmental Health (CDC); and by the New York City Department of Health and Mental Hygiene (NYC DOHMH). Its contents are solely the responsibility of the authors and do not necessarily represent the official views of the NIOSH, CDC, or the Department of Health and Human Services.

Corresponding author information

Kacie Seil, MPH

30-30 47th Ave., Suite 414

Long Island City, NY 11101

718-786-4478