Introduction

As of publication of this report, there is approximately one mobile phone connection for each person on the planet (GSMA Intelligence 2014). While mobile phone ownership is higher in developed countries, mobile phone penetration in developing countries has increased substantially in recent years. In Kenya, for example, approximately 82 percent of people own a mobile phone (Pew Research Center 2014).

Dramatic increases in mobile phone ownership raise the prospect of SMS as a mode of data collection. SMS offers several potential advantages over other modes of data collection: it is inexpensive, may be easily automated, allows respondents to respond to questions at their own convenience, is often perceived as less intrusive than interviews conducted face-to-face or by telephone, and provides a convenient mechanism for incentivizing response through the delivery of talk time. Yet the use of SMS for data collection also suffers from several obvious disadvantages: questions and answers must fit into the space of an SMS, respondents must read and understand the questions themselves, and respondents do not have the opportunity to ask any clarifying questions.

In this study, we report results from two experimental surveys of users of Mobiles for Reproductive Health (m4RH), an SMS-based family planning information service in Kenya. The purpose of these experimental surveys was to assess whether SMS was a feasible mode for collecting data from users for an impact evaluation of m4RH. Prior attempts to call m4RH users directly had failed, so SMS was the only option for collecting data from them. A total of 1,393 new m4RH users were sent a series of five or six questions (the exact number varied) about their background, knowledge of family planning, and use of contraception. The timing of the survey, the talk-time incentive offered for completing the survey, and access to the family planning service were randomized to estimate their effects on response rates. In addition, a subset of users was sent a second, follow-up survey.

We found that 39.5 percent of users began the first survey and 20.9 percent completed all of its questions. By comparison, 28.4 percent of users began the second survey and 14.1 percent completed it. The vast majority of responses received were properly formatted and comprehensible. Survey timing, incentives for completion, and access to the m4RH service had no statistically significant effect on response rates for either the first or the second survey. Furthermore, response rates were very similar between users given full access to the m4RH service and those given only limited access. This suggests that SMS may be an effective tool for gathering outcome data for impact evaluations of services like m4RH, since differential attrition between treatment and control groups is unlikely to be a problem.

Overview of m4RH

m4RH is a free platform, designed and implemented by FHI360, that provides information on several family planning methods over SMS. Users access the system by sending the text “m4RH” to the number 21222 and receive an SMS listing nine family planning methods, along with a “keyword” for each. Users then send the keyword for the method they want to learn about and receive a short, clear SMS with information about that method. Appendix A includes a sample SMS from m4RH. For more information about m4RH, see L’Engle et al. (2013).
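The menu flow just described amounts to a stateless keyword lookup. The Python sketch below illustrates that kind of flow under stated assumptions: the trigger text “m4RH” comes from the description above, but the numeric keywords, the shortened three-item method list, and the message texts are hypothetical placeholders, not m4RH's actual keywords, content, or implementation.

```python
# Hypothetical sketch of a keyword-driven SMS menu like the one described for m4RH.
# The trigger word matches the report; the keywords and message texts are placeholders.

MENU_TRIGGER = "M4RH"  # users text "m4RH"; matched case-insensitively here

# Placeholder keyword -> method mapping (the real menu lists nine methods).
METHODS = {
    "1": "Implants",
    "2": "Pills",
    "3": "Condoms",
}

# Placeholder method -> information message.
INFO = {
    "Implants": "General info on Implants: ...",
    "Pills": "General info on Pills: ...",
    "Condoms": "General info on Condoms: ...",
}


def reply_to(sms_text: str) -> str:
    """Return the reply to one incoming SMS in a stateless menu flow."""
    text = sms_text.strip().upper()
    if text == MENU_TRIGGER:
        # First contact: list each method together with the keyword to send for it.
        options = [f"{keyword} {name}" for keyword, name in METHODS.items()]
        return "Reply with a keyword: " + ", ".join(options)
    if text in METHODS:
        # Keyword received: send the information message for that method.
        return INFO[METHODS[text]]
    # Anything unrecognized: point the user back to the menu.
    return f"Keyword not recognized. Send {MENU_TRIGGER} for the menu."
```

Because each reply depends only on the incoming text, no per-user session state is needed, which keeps this kind of service simple to run over SMS.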

Responses from the experimental surveys showed that m4RH subscribers are highly educated (90.7 percent reported having received secondary or higher education, compared with 42 percent of all adult Kenyans), young (the average age of survey respondents was 25), and predominantly female (only 32 percent of respondents were men).

Methods

This study was conducted primarily to inform the design of another study seeking to estimate the impact of the m4RH service. The preliminary design for that study called for new m4RH users to be randomly assigned to either a treatment group, with full access to m4RH, or a control group, with limited access to m4RH, and for data to be collected via phone interviews. However, previous attempts to conduct telephone interviews with m4RH users had failed, with users indicating that phone calls were intrusive.

The current study was conducted to assess whether data for the impact evaluation could be collected via SMS. In particular, we sought to determine whether response rates would be sufficiently high and whether response rates varied between the treatment and control groups. In addition, we sought to determine what factors affect response rates on SMS surveys.

First Survey Experimental Design

Over the course of two months, 1,393 new users of m4RH were sent a short survey via SMS. The timing of the survey invitation, the incentive offered for completing the survey, and access to the m4RH service were randomly assigned in order to determine the effect of these factors on response rates. The survey invitation was sent either 3 hours after the new user first accessed the m4RH system or at 8 AM on the day after the user first accessed the system. The incentive offered for completing the survey was one of the following: 50 ksh (around 0.5 USD) in talk-time, 100 ksh (around 1 USD) in talk-time, or entry into a lottery for 1,000 ksh (around 10 USD) in talk-time. Access to the m4RH service was set to one of two levels. Users assigned to the treatment access level could use the full m4RH service; users assigned to the control access level were given access only to the list of family planning clinics in their region. (The terms treatment and control are used because they match the groups in the other study.)
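A minimal Python sketch of the per-user assignment and the two invitation-timing rules follows. The three factors and their levels come from the design above; the equal assignment probabilities and the names `assign_arms` and `invitation_time` are assumptions for illustration, since the report does not state the assignment probabilities or describe the software used.

```python
import random
from datetime import datetime, timedelta

# Levels of each randomized factor, as described in the design.
TIMINGS = ("3 hours", "8 AM next day")
INCENTIVES = ("50 ksh", "100 ksh", "lottery")
ACCESS = ("treatment", "control")


def assign_arms(rng: random.Random) -> dict:
    """Independently draw one level of each factor for a new user.

    Equal probabilities are an assumption; the report does not state them.
    """
    return {
        "timing": rng.choice(TIMINGS),
        "incentive": rng.choice(INCENTIVES),
        "access": rng.choice(ACCESS),
    }


def invitation_time(first_access: datetime, timing: str) -> datetime:
    """Return when the survey invitation is sent under a given timing arm."""
    if timing == "3 hours":
        return first_access + timedelta(hours=3)
    if timing == "8 AM next day":
        next_day = first_access + timedelta(days=1)
        return next_day.replace(hour=8, minute=0, second=0, microsecond=0)
    raise ValueError(f"unknown timing arm: {timing!r}")
```

For a hypothetical user who first texts the service at 10:30 PM, the 3-hour arm sends the invitation at 1:30 AM, while the next-day arm sends it at 8 AM, so the two arms can differ in time of day as well as in delay.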

We estimate the effect of each factor on response in the first survey with an OLS regression of user-level response on binary variables for each level of the three randomized factors described above (survey invitation timing, incentive type, and access level), with 3-hour timing, the 50 ksh incentive, and control access as the omitted base categories, plus interaction terms between timing and incentive type. (Because of the random assignment strategy, we exclude interaction terms involving access level.) We use two measures of response: whether the user started the survey and whether the user completed the survey.
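Written out in our own notation (the symbols are not from the report), the first-survey specification described above is a linear probability model for user $i$:

```latex
Y_i = \beta_0 + \beta_1 D_i + \beta_2 H_i + \beta_3 L_i
      + \beta_4 (D_i \times H_i) + \beta_5 (D_i \times L_i)
      + \beta_6 T_i + \varepsilon_i
```

Here $Y_i = 1$ if user $i$ started the survey (or, in the second model, completed it); $D_i = 1$ for the 8 AM next-day invitation; $H_i = 1$ for the 100 ksh incentive; $L_i = 1$ for the lottery incentive; and $T_i = 1$ for treatment access. With 3-hour timing, the 50 ksh incentive, and control access as the base categories, $\beta_0$ is the expected response for that base cell. The effect of the lottery relative to 50 ksh is $\beta_3$ under 3-hour timing and $\beta_3 + \beta_5$ under next-day timing.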

Follow-up Survey Experimental Design

Industry experts with experience conducting surveys over SMS anticipated that a lottery might work for a single survey but would lead to lower response rates on follow-up surveys (personal communication with Geopoll staff, October 8th, 2013). To test this premise, we conducted a second, follow-up survey with a non-probability sample of 482 users who had been invited to participate in the first survey. (These 482 users were the first users to access the service, so being selected for the second survey was not correlated with any of the factors tested in the first survey.) The questions asked in the second survey were the same as in the first. The incentive offered for completing the second survey was randomly assigned to be either 100 ksh in talk-time or entry into a lottery.

The invitation for the second survey was sent to all 482 users at the same time, so the elapsed time between the first and second surveys varied from 5 to 11 weeks. Although this elapsed time was not randomly assigned but instead depended on the date each user first accessed the system, users are unlikely to vary substantially by date of first contact, so we also estimate the effect of elapsed time on response rates.

We estimate the effects of incentive type (for both the first and the second survey), access level, and elapsed time between surveys on response in the second survey with an OLS regression of second-survey response on binary variables for whether the first-survey incentive was the lottery, whether the second-survey incentive was the lottery, whether both incentives were the lottery, and whether the access level was “treatment”, together with the elapsed time between surveys in weeks.
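The regressors for the second-survey model can be built per user as in the Python sketch below. The function name and string labels are hypothetical, and we assume elapsed time is measured from the date of the user's first-survey invitation to the common second-survey date; the report does not specify the exact start point.

```python
from datetime import date
from typing import List


def second_survey_regressors(first_incentive: str, second_incentive: str,
                             access: str, first_survey_date: date,
                             second_survey_date: date) -> List[float]:
    """Return [intercept, lottery1, lottery2, both_lottery, treatment, elapsed_weeks]."""
    lottery1 = 1.0 if first_incentive == "lottery" else 0.0
    lottery2 = 1.0 if second_incentive == "lottery" else 0.0
    # The "both lottery" term is the product of the two indicators.
    both_lottery = lottery1 * lottery2
    treatment = 1.0 if access == "treatment" else 0.0
    # Elapsed time enters as a continuous variable, not a binary one.
    elapsed_weeks = (second_survey_date - first_survey_date).days / 7
    return [1.0, lottery1, lottery2, both_lottery, treatment, elapsed_weeks]
```

Because every user received the second invitation on the same day, variation in `elapsed_weeks` comes entirely from differences in when users first joined, which is why that coefficient does not carry the same causal interpretation as the randomized incentive and access terms.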

All users who were offered a guaranteed talk-time incentive for completing the first survey and who completed it received their talk-time well before the second survey.

Survey Questions

A secondary objective of this study was to test various question wordings and response instructions. Four sets of questions were developed, each with a different format and wording. For example, in one set, the user was instructed to respond with a full word plus the answer, e.g., “AGE 30”, while in another set, the user was instructed to respond with a single letter plus the answer, e.g., “A 30”. Question sets were rotated approximately weekly. Because incentives, survey invitation times, and treatment access were randomly assigned per user while question sets were rotated weekly, the question set a user received was not correlated with incentive type, survey invitation time, or treatment access. Appendix B contains several sample questions.
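Replies in the “KEYWORD ANSWER” format lend themselves to simple automatic parsing, with anything else set aside for a person to read. The Python sketch below is a hypothetical classifier of that kind; the report does not describe how replies were actually processed beyond manual inspection, and the function name and the strict one-word-answer rule are our assumptions.

```python
import re
from typing import Optional, Tuple

# Strict pattern for a correctly formatted reply: "<KEYWORD> <ANSWER>", e.g. "AGE 30".
STRICT = re.compile(r"^\s*([A-Za-z]+)\s+([A-Za-z0-9]+)\s*$")


def classify_reply(sms: str, keyword: str) -> Tuple[str, Optional[str]]:
    """Classify an SMS reply to a question that expects `keyword`.

    Returns ("ok", answer) for a correctly formatted reply, ("skip", None) for
    "<KEYWORD> SKIP", and ("review", None) for anything else, which would be
    set aside for manual inspection.
    """
    match = STRICT.match(sms)
    if match and match.group(1).upper() == keyword.upper():
        answer = match.group(2).upper()
        if answer == "SKIP":
            return ("skip", None)
        return ("ok", answer)
    return ("review", None)
```

Under this rule, both instruction styles parse the same way (“AGE 30” with keyword `AGE`, “A 30” with keyword `A`), while replies with extra words, such as “age 23 years old”, fall through to manual review.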

Results

Overall Response Rates

The first survey was begun by 39.5 percent of users, and 20.9 percent of users completed all questions on the first survey. Response rates to the second survey, which was administered 5 to 11 weeks after the users first accessed the m4RH service, were significantly lower. The second survey was begun by 28.4 percent of users, and 14.1 percent of users completed all questions on the second survey.
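To put these rates in absolute terms, the Python sketch below backs out the approximate number of users implied by each rounded percentage. The counts are derived, not reported, and we assume the second-survey rates are computed over all 482 users invited to it.

```python
# Reported percentages (from the text) and sample sizes. The second-survey
# denominator of 482 assumes rates are computed over all users invited to it.
REPORTED = {
    "first survey": {"n": 1393, "began": 39.5, "completed": 20.9},
    "second survey": {"n": 482, "began": 28.4, "completed": 14.1},
}


def implied_count(rate_percent: float, n: int) -> int:
    """Approximate number of users implied by a rounded percentage of n."""
    return round(rate_percent / 100 * n)


def summarize(reported: dict) -> dict:
    """Implied counts plus the share of starters who went on to finish."""
    out = {}
    for survey, r in reported.items():
        began = implied_count(r["began"], r["n"])
        completed = implied_count(r["completed"], r["n"])
        out[survey] = {"began": began, "completed": completed,
                       "finish_given_start": completed / began}
    return out


for survey, s in summarize(REPORTED).items():
    print(f"{survey}: ~{s['began']} began, ~{s['completed']} completed, "
          f"{s['finish_given_start']:.0%} of starters finished")
```

This gives roughly 550 starters and 291 completers on the first survey and roughly 137 starters and 68 completers on the second; in both surveys about half of the users who began went on to finish (about 53 and 50 percent).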

The vast majority of answers received were comprehensible. Of the 1,653 answers received, 91.8 percent were correctly formatted and comprehensible, 7.8 percent were incorrectly formatted but still comprehensible upon manual inspection,[1] and 0.5 percent (7 responses) were not comprehensible. These rates did not vary significantly with the answer instructions.

Effect of Incentives, Survey Timing, and Access to m4RH on Response Rate

We find no statistically significant effect of survey timing, access to the full m4RH service, or incentive type on response rates for the first survey: an F test of the joint hypothesis that all of the coefficients equal 0 cannot be rejected at the 10 percent level. Similarly, we find no effect of incentive type or access level on response rates for the second survey; estimates of the effects of incentive type and access to m4RH on whether users started and finished the second survey are all small and not statistically significant at the 10 percent level. Our estimate of the effect of the elapsed time between surveys on whether users started the second survey is large and statistically significant at the 10 percent level, but this effect disappears when we look at whether users finished the second survey.
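For reference, the standard (non-robust) form of this joint test, for an OLS regression with an intercept, $k$ slope coefficients, and $n$ observations, is:

```latex
H_0:\ \beta_1 = \beta_2 = \cdots = \beta_k = 0,
\qquad
F = \frac{R^2 / k}{(1 - R^2) / (n - k - 1)} \sim F_{k,\, n-k-1} \text{ under } H_0
```

In the first-survey specification $k = 6$ (timing, two incentive indicators, two interactions, and access), so if all 1,393 invited users enter the regression, the reference distribution would be $F_{6,\,1386}$. This is a sketch under that assumption; the report does not state whether robust standard errors were used, and with a heteroskedasticity-robust covariance matrix the joint test would take the form of a Wald test instead.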

Item Response

Individual item response may provide insight into whether specific questions are causing respondents to quit the survey. In addition, item response may be used to infer the relationship between the number of questions and response rate.

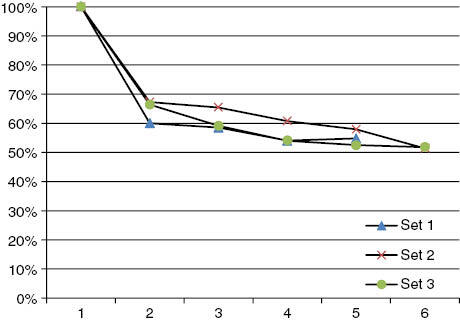

Industry experts with whom we spoke speculated that there is a nonlinear relationship between the number of items and response rates. In particular, the experts hypothesized that response rates would drop dramatically after the fourth or fifth question. Although we saw a large drop in response from the first to the second question, response rates decreased linearly after the second question. No single question appears to have caused a precipitous drop in response rates (Figure 1).
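One way to check for a linear rather than nonlinear decline is to compute the share of invited users answering each question and the drop between consecutive questions: roughly constant drops after the second question indicate a linear decline, while a sudden spike at one question would flag a problem item. The Python sketch below does this; the function name is hypothetical, and the counts in the test are made-up illustrative numbers, not the data behind Figure 1.

```python
from typing import List, Tuple


def retention_profile(answered: List[int], invited: int) -> Tuple[List[float], List[float]]:
    """Share of invited users answering each question, and the drop between questions.

    answered[k] is the number of users who answered question k + 1.
    drops[k - 1] is the fall in share from question k to question k + 1.
    """
    if invited <= 0:
        raise ValueError("invited must be positive")
    shares = [n / invited for n in answered]
    drops = [shares[k - 1] - shares[k] for k in range(1, len(shares))]
    return shares, drops
```

Working with shares of the invited users, rather than raw counts, keeps profiles comparable across surveys of different sizes, such as the 1,393-user first survey and the 482-user second survey.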

Timing of Survey Responses

A potential benefit of SMS surveys is that users may answer questions at their own convenience. In practice, few respondents did this: for the most part, users answered questions promptly or not at all. Two-thirds of responses were received within 5 minutes of the time the question was sent. (Delivery of an SMS in Kenya often takes one or two minutes, so even if users respond as soon as they receive a question, the time between sending the question and receiving the response could be 2 to 4 minutes.) A small share (about 1 in 20) of responses were received more than one hour after the question was sent.
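The timing figures above come from comparing, for each response, the time the question was sent with the time the reply arrived. A minimal Python sketch of that tabulation follows, assuming both timestamps are recorded on the sending side (so each delay includes delivery time in both directions); the function name and the sample delays in the test are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def delay_shares(pairs: List[Tuple[datetime, datetime]]) -> Tuple[float, float]:
    """Return (share received within 5 minutes, share received after 1 hour).

    Each pair is (question_sent_at, response_received_at).
    """
    if not pairs:
        raise ValueError("need at least one response")
    delays = [received - sent for sent, received in pairs]
    n = len(delays)
    within_5_min = sum(d <= timedelta(minutes=5) for d in delays) / n
    after_1_hour = sum(d > timedelta(hours=1) for d in delays) / n
    return within_5_min, after_1_hour
```

Since a user who replies immediately can still show a 2 to 4 minute delay, a 5-minute window is a reasonable cutoff for treating a response as prompt.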

Discussion

SMS has great theoretical potential as a mode of data collection, especially in developing countries. SMS is inexpensive, may be easily automated, allows respondents to respond to questions at their own convenience, and, in many contexts, may be perceived as less intrusive than interviews conducted face-to-face or by telephone. Yet SMS suffers from several disadvantages, which include the need for literacy and the relatively limited length of messages.

To the author’s knowledge, this is the first study to assess the feasibility of directly administering a survey over SMS in a developing country. I conducted several experiments on users of a family planning mHealth service in Kenya to assess whether SMS is a feasible mode of gathering data from these users and which design features of an SMS survey lead to higher response rates. I find that, for the population in question, SMS is indeed a feasible mode of data collection. Of the users invited, 39.5 percent began the first survey, which was administered shortly after they first contacted the mHealth service, and 28.4 percent began a second survey administered 5 to 11 weeks later. The vast majority of answers on both surveys were comprehensible. Access to the service itself, the timing of the survey invitation, and the type of incentive offered for survey completion had no statistically significant effect on response rates in either survey. These results suggest that SMS surveys may be a useful tool for gathering data on users of such services and can be administered without expensive individual incentives.

Acknowledgements

This work was supported by the United States Agency for International Development under grant number GPO-A-00-09-00007-00. The author thanks Pam Riley, Randall Juras, Soonie Choi, Pam Mutua, the entire team at TexttoChange and Marcus Wagenaar in particular, FHI360 and Kelly L’Engle in particular, Ken Gaalswyk, and attendees at an internal Abt presentation.

References

Appendix A – Sample m4RH Content

General info on Implants: Implants are small rods placed under skin of woman’s arm. Highly effective for 3–5 years. Can be removed anytime. For married and singles. May cause light irregular bleeding. When removed, can become pregnant with no delay. No infertility or birth defects. Main menu reply 00. More information reply 12.

Appendix B – Sample Survey Questions

- How old are you? Reply with AGE and the number of years like AGE 25. Reply AGE SKIP to skip this question.

- What is your highest level of schooling? Reply SCHOOL 1 if no education, SCHOOL 2 if primary, SCHOOL 3 if secondary, SCHOOL 4 for higher.

- Thanks for your answer! Next question arriving shortly. Do you or your sexual partner use contraception? Reply USE 1 if yes or USE 2 if no. Reply USE SKIP to skip this question.

- Thanks for your answer! Next question arriving shortly. Can coil move to other parts of the body? Reply MOVE 1 if yes and MOVE 2 if no. Reply MOVE SKIP to skip question.

[1] A few examples of such responses are “implants 1 for 6 months” rather than “implants 1,” “use + condoms” rather than “use condoms,” and “age 23 years old” rather than “age 23.”