Introduction

In many household surveys, respondents are asked to provide information about other members of their household as well as themselves (Bickart et al. 1990). This type of survey response, within-household proxy reporting, refers to the process by which one household member responds on behalf of other household members. Within-household proxy reporting offers several advantages over interviewing each household member individually, including lower costs due to fewer visits to the sampled household and faster data collection (Krosnick et al. 2015). While self-response is generally assumed to yield better data quality, this may depend on factors such as the relationship between household members and how observable the behaviors being asked about are (Menon et al. 1995; Tamborini and Kim 2013). The motivation and knowledge of a proxy respondent may differ from those of the target respondent, leading to differences in effort, recall strategy, and potentially error in the responses the proxy provides (Bickart et al. 1990; Blair, Menon, and Bickart 1991). Fulton et al. (2020) provide a broader discussion of proxy reporting.

To evaluate the feasibility of proxy response for a given topic, Blair, Menon, and Bickart (1991) discussed a cognitive interviewing approach in which two members of the same household (“pairs”) are interviewed about the same survey questions. In this approach, researchers ask both members of a pair an identical set of questions and use think-aloud and probing techniques to assess their response strategies. This allows researchers to determine the match (convergence) rate between self and proxy responses for each question and to assess whether, and how, response strategies differ. In recent years, this paired cognitive interviewing approach has been used to explore proxy response for sexual orientation, gender identity, and health questions (Holzberg et al. 2019; Zuckerbraun, Allen, and Flanigan 2020). In this paper, we discuss design considerations and recommendations for studies using this approach, based on our experience testing two supplements to the Current Population Survey (CPS): one on Volunteering and Civic Life and one on Computer and Internet Use. More details about our paired cognitive interviewing design can be found in Fulton et al. (2020).

Designing a Paired Cognitive Interview Study

Recruitment and Screening

What types of participants should be recruited?

As with all cognitive interviewing studies, participants may be targeted because they belong to a specific subpopulation (e.g., teachers or homeowners) or because they have characteristics specific to the survey topic of interest (e.g., low levels of Internet use). In paired cognitive interview studies, researchers must also consider how participants’ household relationships could influence how they answer questions on behalf of other household members. The social distance between two household members could influence the proxy’s knowledge of specific survey topics (Pascale 2016), particularly sensitive ones. Agreement between self and proxy responses is greater when household members communicate more (Kojetin and Tanur 1996).

Therefore, we recommend recruiting pairs with differing levels of social distance to learn more about the quality of proxy information provided, particularly if the survey questions are difficult or sensitive. This differs from the paired cognitive interviewing design of Blair, Menon, and Bickart (1991), who looked only at pairs who were married or living together as married. In our testing of the two CPS supplements, we recruited a mix of related and unrelated pairs. Related pairs included spouses/unmarried partners and family relationships such as parent/child, grandparent/child, and cousins. Unrelated pairs included roommates, housemates, and friends. In both studies, related pairs were easier to recruit than unrelated pairs; recruiting unrelated pairs required additional steps such as tailoring advertisements.

How many pairs of participants should be interviewed?

To understand the feasibility of proxy response, it is important to collect enough data to determine how often pairs’ responses match and what response strategies participants use. How many pairs are needed depends on how important it is to explore the effect of social distance on proxy responses. While there is no magic number for a paired cognitive interview study, we recommend interviewing at least ten pairs of participants (20 individuals). Closer to twenty pairs (40 interviews) would be optimal for evaluating proxy response quality across relationship types: it would allow for ten related pairs with different types of relationships and ten unrelated pairs, which we think is enough to observe meaningful differences in the quality of proxy response. We interviewed 21 pairs for the Volunteering and Civic Life supplement (11 related and 10 unrelated) and 14 pairs for the Computer and Internet Use supplement (seven related and seven unrelated).

How should participants be screened?

The first participant should be screened to collect basic details about themselves and the other members of the household (e.g., relationships and ages). The researchers can then select which other household member will complete the pair, or they can leave the choice to the screened participant. This decision involves tradeoffs. If the participant chooses, they will likely pick the person most willing or available to participate, which facilitates recruitment. However, the participant could select a household member who does not have the characteristics of interest. We have no evidence that participants were more likely to choose someone based on their relationship, but few of our households included a mixture of related and unrelated people.

Another decision is whether to screen the second person as well as the first participant. If both people are screened, researchers can get an initial sense of how well self and proxy responses might align during the interviews, and can use each person’s self-reported screener answers to confirm that both have the desired characteristics. Initially, we screened only one member of each household for our CPS interviews. We quickly learned that the members of a pair did not always report the characteristics of interest consistently, so we could not be confident that the second member actually had the characteristics we were exploring.

Therefore, we recommend screening both members of the pair and allowing the initially screened participant to choose which household member is screened as the second member. We feel that this improves participant engagement and encourages more pairs to complete the study, which outweighs the drawbacks of this approach. Although the participant may choose someone who lacks the characteristics of interest, this can be detected through screening. Conversely, requiring the participant to recruit a particular household member, who may be less interested in taking part, may be burdensome and risks losing the initially screened participant altogether.

Scheduling and Conducting the Interview

How should pairs of participants be scheduled?

Researchers must decide how flexible to be in the interview times offered to participants and whether to use the same cognitive interviewer for both members of a pair. Each of these design choices has advantages and disadvantages. Interviews scheduled close together (e.g., one after another on the same day) could increase the likelihood that both members of a pair complete their individual interviews. In our experience, when interviews were scheduled simultaneously or back-to-back on the same day, both members typically traveled to the interview location together. However, limiting scheduling to this arrangement could reduce interest in participating if household members’ schedules differ greatly. A potential disadvantage of scheduling on separate days is that the first participant may tell the second what to expect in the interview, thereby influencing their responses.

If the two interviews are scheduled in succession and conducted by a single interviewer, the interviewer will know where the pair’s responses do not match and can use information from the first interview to tailor probes for the second. This is valuable because it lets the interviewer explore why the two responses differed. However, interviewers should phrase these probes neutrally and refrain from telling the second participant the first participant’s responses, so as not to bias their feedback. It may also help to ask these probes retrospectively at the end of the interview.

On the other hand, if one interviewer conducts two interviews in succession, interviewer fatigue can occur, especially with longer cognitive interviews. If the first interview runs over the allotted time, the interviewer may feel pressured to rush or cut portions of the second interview to end on time. Using two different interviewers reduces the likelihood of fatigue and allows interviews to be scheduled simultaneously. If two interviewers are used and the interviews take place at separate times or on separate days, the second participant’s interviewer could use knowledge of the first participant’s answers to probe about any discrepancies in the pair’s responses.

Initially, we conducted both interviews of a pair only on the same day, back-to-back, with the same interviewer. Later in the interviewing period, however, we allowed interviews to be scheduled on separate days, which aided recruitment and generally did not affect study completion. We found that having different interviewers for a pair did not affect data quality: we were still able to gather the information needed to compare self and proxy responses, as well as qualitative data from probing questions. We also allowed the two members of a pair to be interviewed within a couple of business days of each other, which may have helped recruit additional unrelated participants with differing schedules. We recommend giving participants maximum scheduling flexibility.

What type of probing is helpful to understand participants’ knowledge of other household members’ behaviors?

There are many types of probing questions a researcher can embed to understand how a proxy answered for other household members. In addition to asking the proxy how they came up with their answers for other household members, we recommend probes that focus on difficulty of response and confidence in answering. These can be open-ended or closed-ended questions with a response scale (e.g., “Very easy” to “Very difficult” and “Very confident” to “Not confident at all”). An open-ended probe may provide more detailed information about the participant’s response process and the challenges they had answering as a proxy. However, relying only on open-ended probes complicates analysis, because the responses must be coded. Closed-ended easiness/difficulty probes permit quicker comparative analyses.

In our studies, we asked open-ended probes about how the proxy came up with their answers for the other household member, how difficult answering was, and how confident they were. Coding these responses took a lot of time, so researchers should weigh the value of open-ended against closed-ended probes. Nevertheless, the open-ended probes revealed how proxies answered for other household members (e.g., by observing the other member’s behavior or by guessing), which we would not have learned from closed-ended probes. These probes were valuable for understanding the response process in both related and unrelated pairs.

Analysis

What analytical methods should be used?

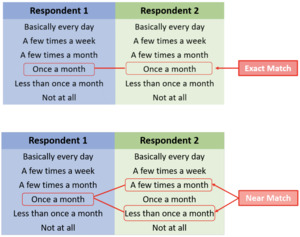

In addition to reviewing responses to probing questions, researchers should compare, for each question, each participant’s self-report with the proxy report the other member of the pair gave about them, and calculate a match rate. A high percentage of exact matches on a question may indicate that a proxy is able to answer it for the other person, whereas a high percentage of mismatches could indicate potential data quality issues. Match rates are easy to calculate for yes/no questions because the answers are binary, but other question types are more complicated. For a six-point scale question, an exact match occurs when both participants provide the identical answer (see Figure 1, top panel). Exact matches are harder to achieve on such a question because it has more categories than a yes/no question. One solution is to also calculate “near” matches, in which participants provide similar but not identical answers. This may be particularly useful if there is already a plan to collapse response options for production data collection. For a six-point scale, a near match might be defined as a difference of one scale point (see Figure 1, bottom panel).
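The exact and near match rates for a single scale question can be sketched in Python as follows. This is a minimal illustration, not the code used in our studies; the function name `match_rates`, the 1-to-6 coding, and the answer lists are hypothetical. For yes/no questions coded 0/1, only the exact rate is meaningful, so `tolerance=0` should be passed.

```python
from typing import Sequence, Tuple


def match_rates(self_answers: Sequence[int],
                proxy_answers: Sequence[int],
                tolerance: int = 1) -> Tuple[float, float]:
    """Return (exact_rate, exact_or_near_rate) for one survey question.

    Position i holds one self/proxy comparison: the target person's own
    answer and the answer the other pair member gave about that person.
    A near match is a difference of at most `tolerance` scale points.
    """
    if len(self_answers) != len(proxy_answers) or not self_answers:
        raise ValueError("answer lists must be non-empty and the same length")
    pairs = list(zip(self_answers, proxy_answers))
    exact = sum(1 for s, p in pairs if s == p)
    within = sum(1 for s, p in pairs if abs(s - p) <= tolerance)
    return exact / len(pairs), within / len(pairs)


# Hypothetical six-point scale answers (1-6) for five self/proxy comparisons.
self_report = [1, 2, 4, 6, 3]
proxy_report = [1, 3, 4, 4, 3]
exact_rate, exact_or_near_rate = match_rates(self_report, proxy_report)
```

In this hypothetical example, three of the five comparisons match exactly (60%) and a fourth differs by one point, so the exact-or-near rate is 80%.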

Questions with open-ended numeric answers (e.g., number of volunteer hours per year) can pose challenges because answers can span a large range, so researchers should consider grouping these responses into ranges. We suggest defining ranges for exact and near matches and then re-running the analyses with alternative ranges to test how much this decision affects the results.
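A minimal sketch of this range-based approach follows, under stated assumptions: the cut points, the data, and the helper names are hypothetical, and a near match is assumed to mean answers falling in adjacent ranges, which is one possible definition rather than the one we used.

```python
import bisect
from typing import Dict, List, Sequence, Tuple


def to_range(value: float, edges: Sequence[float]) -> int:
    """Return the index of the range containing `value`.

    `edges` are ascending cut points. With edges [1, 10, 50]:
    value < 1 -> 0, 1 <= value < 10 -> 1, 10 <= value < 50 -> 2, value >= 50 -> 3.
    """
    return bisect.bisect_right(edges, value)


def range_match_rates(self_vals: Sequence[float],
                      proxy_vals: Sequence[float],
                      edges: Sequence[float]) -> Tuple[float, float]:
    """Exact rate (same range) and exact-or-near rate (same or adjacent range)."""
    ranges = [(to_range(s, edges), to_range(p, edges))
              for s, p in zip(self_vals, proxy_vals)]
    n = len(ranges)
    exact = sum(1 for a, b in ranges if a == b) / n
    near = sum(1 for a, b in ranges if abs(a - b) <= 1) / n
    return exact, near


def sensitivity(self_vals: Sequence[float],
                proxy_vals: Sequence[float],
                candidate_edges: List[Sequence[float]]
                ) -> Dict[Tuple[float, ...], Tuple[float, float]]:
    """Re-run the match-rate calculation under each candidate range definition."""
    return {tuple(edges): range_match_rates(self_vals, proxy_vals, edges)
            for edges in candidate_edges}


# Hypothetical annual volunteer hours reported by self and by proxy.
self_hours = [0, 12, 40, 100, 5]
proxy_hours = [0, 20, 60, 80, 15]
results = sensitivity(self_hours, proxy_hours, [[1, 10, 50], [1, 25, 100]])
```

With these hypothetical data, the exact match rate is 60% under the first set of ranges and 80% under the second, while the exact-or-near rate is 100% under both, which illustrates why re-running the analysis with alternative ranges is worthwhile.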

In our studies, yes/no questions had the highest exact match rates, followed by scale questions and then open-ended numeric questions. This makes sense: a proxy guessing at random on a yes/no question would have a 50% chance of matching, and a lower chance on scale and open-ended numeric questions, which have more possible answers. Overall, the average exact match rates ranged from 36% to 100% for yes/no questions (asked in both the Computer and Internet Use and the Volunteering and Civic Life supplements), 23% to 55% for scale questions (asked in Volunteering and Civic Life only), and 21% to 30% for open-ended numeric questions (asked in Volunteering and Civic Life only). When near and exact matches were combined, the ranges were 54% to 87% for scale questions and 41% to 44% for open-ended numeric questions. This was informative: although pairs may not match exactly on these more complex questions, their responses may differ by only one scale point or category, which may suggest the proxy has some knowledge of the other household member’s behaviors and activities.
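The guessing argument can be made concrete by enumerating the chance match rate. This is a rough benchmark under a strong, hypothetical assumption: the self-report and the proxy’s guess are independent and uniformly distributed over the k response categories. (If only the proxy guesses uniformly, the exact-match baseline is still 1/k whatever the self-report.) Real answer distributions are rarely uniform, so these figures are illustrative only.

```python
from fractions import Fraction


def chance_match_rate(k: int, tolerance: int = 0) -> Fraction:
    """Probability that two independent, uniformly random answers on a
    k-category item differ by at most `tolerance` categories.

    tolerance=0 is the exact-match baseline; tolerance=1 is the
    exact-or-near baseline for a scale item with ordered categories.
    """
    hits = sum(1 for s in range(k) for p in range(k) if abs(s - p) <= tolerance)
    return Fraction(hits, k * k)


yes_no = chance_match_rate(2)
six_point_exact = chance_match_rate(6)
six_point_near = chance_match_rate(6, tolerance=1)
```

Under this assumption, the baselines are 1/2 for a yes/no question, 1/6 (about 17%) for an exact match on a six-point scale, and 4/9 (about 44%) for an exact-or-near match within one scale point.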

Among the questions with the highest match rates were those on email use and texting/instant messaging use, both yes/no questions. This was not surprising: these are popular forms of communication, are not sensitive topics, and are easily observable, since members of the same household may communicate with each other on these platforms. Questions with match rates below 50% included accessing health records online (a yes/no question) and the number of hours spent volunteering (an open-ended numeric question). Even though a proxy guessing at random would have had a 50% chance of matching on the health records question, its match rate may have been low because it asks about a behavior that could be considered sensitive. Proxies may therefore be unlikely to observe this behavior, which may explain the difficulty in answering and the low match rate. The low match rate for hours spent volunteering is less surprising, given the open-ended question type and that volunteering may be difficult to observe directly.

In our testing of the two CPS supplements, match rates did not differ much between related and unrelated pairs (Fulton et al. 2020). In fact, in both studies, unrelated pairs matched at a slightly higher rate than related pairs. We suspect this is because the questions tested in the Computer and Internet Use supplement were not particularly difficult to observe or answer, and the questions in the Volunteering and Civic Life supplement were generally not sensitive. We also did not observe differences between related and unrelated pairs in the response strategies they used.
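Comparing related and unrelated pairs amounts to computing the match rate separately for each relationship group. The sketch below assumes one question with hypothetical group labels and answers; the function name is illustrative, not taken from our analysis.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def match_rate_by_group(records: Iterable[Tuple[str, str, str]]) -> Dict[str, float]:
    """Exact match rate per relationship group for one question.

    Each record is (group, self_answer, proxy_answer), where group is a
    label such as "related" or "unrelated".
    """
    totals: Dict[str, int] = defaultdict(int)
    matches: Dict[str, int] = defaultdict(int)
    for group, self_answer, proxy_answer in records:
        totals[group] += 1
        if self_answer == proxy_answer:
            matches[group] += 1
    # Groups with no matches still appear, with a rate of 0.0.
    return {group: matches[group] / totals[group] for group in totals}


# Hypothetical yes/no records for one question.
records = [
    ("related", "yes", "yes"),
    ("related", "no", "yes"),
    ("unrelated", "yes", "yes"),
    ("unrelated", "no", "no"),
]
rates = match_rate_by_group(records)
```

With groups of the size typical in cognitive interviewing, a single differing answer can shift a group’s rate by several percentage points, so small differences between groups are best read descriptively.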

Discussion

We found the paired cognitive interviewing design to be successful in evaluating whether participants can answer survey questions as proxies for other household members. Recruiting both related and unrelated pairs, and identifying survey questions with high or low match rates, provided useful insight into the quality of proxy response data. We also found that allowing maximum scheduling flexibility did not seem to affect study completion. Embedding a series of probing questions that captured how the proxy answered for the other household member was useful. Finally, calculating “near” matches for scale questions with more than two response options helped us understand whether pairs’ responses were similar.

We recommend embedding closed-ended probing questions that ask about easiness/difficulty and confidence in answering specific survey questions. These probes can help researchers understand whether perceived difficulty, confidence, and response strategies are associated with data quality. For example, higher perceived easiness or higher confidence might predict a higher likelihood of a match between the proxy response and the self-response. Katz and Luck (2020) found that there may be no relationship between perceived easiness/difficulty and match rates, but this remains an area for future research. Future research should continue to examine how proxy responses are affected by the relationship between the paired participants; by response process errors in comprehension, recall, judgment, and response mapping; and by the extent to which proxies know about or observe the other household member’s behaviors.
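A first look at the proposed association could compare mean confidence ratings for matched and mismatched proxy answers. This is a minimal sketch; the 1-to-4 coding of the confidence scale, the data, and the function name are hypothetical, and with cognitive-interview sample sizes such a comparison is descriptive rather than a formal test.

```python
from statistics import mean
from typing import Dict, Iterable, List, Tuple


def mean_confidence_by_match(rows: Iterable[Tuple[int, bool]]) -> Dict[bool, float]:
    """Mean confidence rating for matched vs. mismatched proxy answers.

    Each row is (confidence_rating, matched). Ratings are assumed to be coded
    1 = "Not confident at all" up to 4 = "Very confident" (hypothetical coding).
    """
    grouped: Dict[bool, List[int]] = {True: [], False: []}
    for rating, matched in rows:
        grouped[matched].append(rating)
    # Skip a group with no observations rather than averaging an empty list.
    return {matched: mean(ratings) for matched, ratings in grouped.items() if ratings}


# Hypothetical (confidence, matched) rows for one question.
rows = [(4, True), (3, True), (2, False), (4, False), (1, False)]
summary = mean_confidence_by_match(rows)
```

A higher mean rating among matched answers would be consistent with confidence predicting matches, though with so few observations it would not establish such a relationship.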

We found that within-household proxy reporting can be an effective method for collecting data about household members who are not available to respond. However, researchers should consider the relationship between household members, the observability of the behavior, and the question type (yes/no, scale, or open-ended numeric) when deciding whether to allow proxy responses. Simple yes/no questions about easily observed behaviors (text messaging, email) yielded higher match rates than more complex question types about less observable behaviors (the number of hours spent volunteering). Match rates also did not differ much between related and unrelated pairs, perhaps because of the nature of the questions asked in these supplements.

Building on our experience with paired cognitive interviewing, we incorporated many elements of this design into the pretesting of two additional Current Population Survey supplements in 2021, which was conducted remotely by telephone because of the COVID-19 pandemic. As remote cognitive testing becomes more common, future research should compare the effectiveness of paired cognitive interviewing conducted remotely with that of in-person testing.

Note

This presentation is released to inform interested parties of research and to encourage discussion. The views expressed are those of the authors and not those of the U.S. Census Bureau. The paper has been reviewed for disclosure avoidance and approved under CBDRB-FY20-434.